
Cost Management

Statsig’s pipelines follow many SQL best practices to reduce cost, and smaller customers can run month-long analyses for a few pennies. Following the best practices below will help keep costs under control and predictable. See Compute Cost Transparency to understand how much compute time your experiments use.

Follow SQL Best Practices

Statsig uses your SQL to connect to your source data. Here are some common issues:

Avoid using SELECT *, and only select the columns you’ll need

- SELECT * makes it unclear to other users which data you are pulling and which columns you actually need

- For warehouses like BigQuery and Snowflake, it can increase the amount of data scanned and the size of materialized assets, such as CTEs in Snowflake

- This leads to longer query runtimes and higher warehouse bills

Filter to the data you will need in your base query

- This reduces the amount of data scanned and processed in later operations

- You can use Statsig Macros (below) to dynamically prune date partitions in subqueries

Group commonly used metrics into a single Metric Source

- Statsig filters your source to the minimal set of data and then creates materializations of experiment-tagged data. To maximize the effectiveness of this strategy, build Metric Sources that cover suites of metrics that are usually analyzed together.

Cluster/Partition tables to reduce query scope

Most warehouses offer some form of partitioning or indexing on frequently filtered keys. In warehouses with clustering, we recommend the following strategy:

- Partition/Cluster Assignment Source tables by date, and then by your experiment_id column, so experiment-level queries can be scoped to just that experiment

- Partition/Cluster Metric Source tables based on date, and then the fields you expect to use for filters

Materialize Tables/Views

Since Statsig offers a flexible and robust metric creation flow, it’s common to write joins or other complex queries in a Metric Source. As tables and experimentation velocity scale, these joins can become expensive, since they run in every experiment analysis. To reduce this impact, the best practice is to:

- Materialize the results of the join and reference the materialized table in Statsig, so you only compute the join once

- Use Statsig macros to make sure partitions are pruned before the join, and you only join the data you need

Use Incremental Reloads

Statsig offers both Full and Incremental reloads. Incremental reloads only process new data, and can be significantly cheaper on long-running experiments. Several settings in the Pulse advanced settings section (also available as an org-level default) let you make tradeoffs to reduce total compute cost. For example, “Only calculate the latest day of experiment results” skips the timeseries calculation, which lets large full reloads (e.g., one year of data on 100M users) run in 5 minutes on a Large Snowflake cluster.

Reload Data Ad-Hoc

Depending on your warehouse and data size, Statsig Pulse results can be available in as little as 45 seconds. Since pipelines have fixed per-run costs, reloading 5 days is not 5 times as expensive as loading one day. If cost is a major concern, being judicious about which results you schedule versus load on demand can significantly reduce the amount of processing your warehouse has to do.

Leverage Statsig’s Advanced Options

By default, Statsig runs a thorough analysis, including historical timeseries and context on your results.

Utilize Metric Level Reloads

Statsig offers metric-level reloads, which let you add a new metric to an experiment and get its entire history, or restate a single metric after its definition has changed. This is cheaper than a full reload for experiments with many metrics, and is an easy way to check guardrails or answer follow-up questions post hoc.

Use Statsig’s Macros

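In Metric and Assignment sources, you can use Statsig Macros to directly inject a DATE() value relative to the experiment period being loaded. The macros are:

- {statsig_start_date}

- {statsig_end_date}

For example, when Statsig loads the period from 2023-09-01 to 2023-09-03, {statsig_start_date} resolves to 2023-09-01 and {statsig_end_date} resolves to 2023-09-03, so a date filter written with these macros limits the query to just those three days.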
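To make the substitution concrete, the Python sketch below mimics it on a hypothetical Metric Source query so you can see the shape of the final SQL. The table `analytics.events`, the `event_date` column, the `render_macros` helper, and the `DATE('YYYY-MM-DD')` rendering are all assumptions for illustration; Statsig performs the actual substitution, and the exact literal it emits may differ by warehouse.

```python
from datetime import date

# Hypothetical Metric Source SQL that filters on the Statsig date macros.
METRIC_SOURCE_SQL = """
SELECT user_id, event, value, ts
FROM analytics.events
WHERE event_date BETWEEN {statsig_start_date} AND {statsig_end_date}
"""


def render_macros(sql: str, start: date, end: date) -> str:
    """Replace the date macros with DATE literals (illustrative only).

    str.replace is used instead of str.format so that any other braces
    in the SQL are left untouched.
    """
    return (
        sql.replace("{statsig_start_date}", f"DATE('{start.isoformat()}')")
           .replace("{statsig_end_date}", f"DATE('{end.isoformat()}')")
    )


rendered = render_macros(METRIC_SOURCE_SQL, date(2023, 9, 1), date(2023, 9, 3))
print(rendered)
```

Because the filter targets the date column directly, a warehouse partitioned or clustered on that column can prune everything outside the loaded period before any joins or aggregations run.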

Avoid Contention

Resource contention is a common problem for Data Engineering teams. Usually, there are large runs in the morning to calculate the previous day’s data or reload tables. On warehouses that have fixed resources or scaling limits, Pulse queries can be significantly slower during these windows, and will also slow down core business pipelines. The best practice is to assign a scoped resource to Statsig’s service user. This has a few advantages:

- Costs are easy to understand, since anything billed to that resource is attributable to Statsig

- You can control the max spend by controlling the size of the resource, and independently scale the resource as your experimentation velocity increases

- Statsig jobs will not affect your production jobs, and vice versa

- Schedule your Statsig runs after your main runs; this also ensures the data in your experiment analysis is fresh

- Use API triggers to launch Statsig analyses after the main run is finished

Analytics Optimization

When using Statsig’s Metric Explorer to visualize the data in your warehouse, optimizing table layout and clustering configuration can greatly reduce latency. This section describes best practices you can use to improve the performance of analytics queries, with recommendations for some of the most commonly used warehouses.

BigQuery

Table Layout - Partitioning & Clustering

We advise partitioning on event date and clustering on event when defining your events table. This improves performance because most queries filter on the event name and the time it was logged. When defining the partition on event date, truncate the timestamp to day-level granularity instead of using the raw timestamp, which has millisecond or finer precision and would result in very high cardinality.

Databricks

(Preferred) Use Liquid Clustering

When deciding how to cluster your events table, liquid clustering provides a flexible approach that lets you change your clustering keys without manually rewriting existing data. We recommend enabling liquid clustering on your events table and scheduling OPTIMIZE jobs every 1-2 hours, which incrementally apply liquid clustering to the table.

(Alternative) Partitioning and ZORDER

If you choose not to use liquid clustering, we recommend partitioning on a single low-cardinality column, such as the date of the event. Avoid adding more than one partition column unless absolutely necessary, and don’t partition on a column whose cardinality would exceed one thousand. We suggest using a generated column (for example, an event date derived from the event timestamp) to simplify pruning.

You can use ZORDER to colocate similar values within a file for a high-cardinality column, which improves data skipping and therefore query performance. We recommend doing this on the event column.
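The partitioning rule can be sanity-checked with a quick cardinality comparison. This Python sketch uses hypothetical sample data (one event per minute for 30 days) and compares partitioning on raw timestamps against a day-truncated date column, using the one-thousand threshold from the guidance above. The helper name and sample data are assumptions; in practice you would measure cardinality with a COUNT(DISTINCT ...) query in your warehouse.

```python
from datetime import datetime, timedelta

# Threshold taken from the guidance: avoid partition columns above ~1,000 values.
MAX_PARTITION_CARDINALITY = 1_000


def is_reasonable_partition_column(values) -> bool:
    """Return True if the column has few enough distinct values to partition on."""
    return len(set(values)) <= MAX_PARTITION_CARDINALITY


# Hypothetical sample: one event per minute for 30 days.
start = datetime(2024, 1, 1)
timestamps = [start + timedelta(minutes=i) for i in range(30 * 24 * 60)]

# Raw timestamps: nearly every row gets its own partition value.
raw_ok = is_reasonable_partition_column(timestamps)

# Generated date column: each timestamp truncated to the day.
event_dates = [ts.date() for ts in timestamps]
date_ok = is_reasonable_partition_column(event_dates)

print(len(set(timestamps)), raw_ok)    # 43200 False
print(len(set(event_dates)), date_ok)  # 30 True
```

The same reasoning applies to the day-level truncation recommended for BigQuery and Snowflake: a day-level key grows by one value per day, while a raw timestamp grows with every row.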

By running OPTIMIZE on the last week’s event data, you can improve query performance by compacting many small data files into fewer, larger files.

The same OPTIMIZE run can also apply ZORDER on the event column.

If extra parallelism is desired without introducing too many partitions, you can also ZORDER by an additional bucket column.
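One hypothetical way to define such a bucket column is to hash a higher-cardinality key, such as a user ID, into a fixed number of buckets. The Python sketch below shows the idea; in Databricks the same logic would be expressed as a SQL expression on the table. `NUM_BUCKETS`, `bucket_for`, and the `user_{i}` keys are illustrative assumptions, not part of any Statsig or Databricks API.

```python
import hashlib

NUM_BUCKETS = 16  # hypothetical; size this to the parallelism you want


def bucket_for(key: str, num_buckets: int = NUM_BUCKETS) -> int:
    """Map a key (e.g. a user ID) to a stable bucket in [0, num_buckets).

    Uses SHA-256 rather than Python's built-in hash(), which is salted per
    process for strings and therefore not stable across runs.
    """
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_buckets


buckets = [bucket_for(f"user_{i}") for i in range(1_000)]
print(len(set(buckets)))  # number of distinct buckets actually used
```

A stable hash matters here: the bucket value must be the same every time a row is written, or ZORDER would colocate unrelated rows.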

Partition Pruning

Dynamic File Pruning enables Spark to prune partitions based on filter values at runtime. It should be enabled if possible.

Handling Skew and Joins

To rebalance skew and adjust join strategies, we advise turning on Adaptive Query Execution.

Compute Choices

The Photon query engine allows for faster execution of queries with more efficient use of CPU and memory. If possible, enable it for your compute cluster or SQL warehouse.

Redshift

Table Design - Distribution Style and Sort Key

Because Redshift does not support partitioning by column, use a sort key instead. Since most event tables are append-only and time-based, we advise a compound sort key on (timestamp, event) so that the data is ordered by time. Because analytics queries filter on timestamp, this allows queries to read only the relevant blocks. Ordering secondarily by event groups rows by event within each time block, which can reduce scan size when filtering by event. Pruning should happen primarily on timestamp, which is why it is the first key.

Because there can be significant skew among event types, we generally advise an automatic distribution style (DISTSTYLE AUTO) rather than distributing on the event column. This lets Redshift make distribution decisions based on the size of the table and query patterns. Create your events table with both recommendations in mind: a compound sort key on (timestamp, event) and automatic distribution.

Snowflake

Clustering Keys

Because Snowflake does not support explicit partitioning, we recommend clustering keys on the event date and event, since most queries filter on the event name and the time it was logged. Cluster on the timestamp truncated to day-level granularity rather than the raw timestamp, which has millisecond or finer precision and would cause very high cardinality.

Managing High Cardinality Columns

If there are high-cardinality columns that you frequently reference in Metric Explorer filters, consider using search optimization rather than clustering on them. This avoids micro-partitions that each span an overly large range of values, which would make pruning inefficient. For high-cardinality columns, you can instead rely on the auxiliary search index generated through search optimization.

Monitoring Clustering

You can use SYSTEM$CLUSTERING_INFORMATION to check whether your current clustering scheme is effective. Large values of average_depth and average_overlaps may indicate that the table should be reclustered on different keys with lower cardinality.
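SYSTEM$CLUSTERING_INFORMATION returns a JSON string (for example, from `SELECT SYSTEM$CLUSTERING_INFORMATION('events')`, where `events` is a hypothetical table name). The Python sketch below parses output of that shape and flags tables whose depth or overlap looks high. The sample values and the two thresholds are made-up placeholders; Snowflake does not define universal cutoffs, so tune them for your own tables.

```python
import json

# Hypothetical output in the shape returned by SYSTEM$CLUSTERING_INFORMATION
# (values invented for illustration).
SAMPLE_OUTPUT = """{
  "cluster_by_keys": "LINEAR(TO_DATE(ts), event)",
  "total_partition_count": 1200,
  "total_constant_partition_count": 40,
  "average_overlaps": 85.3,
  "average_depth": 62.1,
  "partition_depth_histogram": {"00000": 0, "00001": 10}
}"""

# Placeholder thresholds; not Snowflake recommendations.
MAX_AVERAGE_DEPTH = 10.0
MAX_AVERAGE_OVERLAPS = 20.0


def needs_reclustering(raw_json: str) -> bool:
    """Return True if average depth or overlaps exceed the chosen thresholds."""
    info = json.loads(raw_json)
    return (
        info["average_depth"] > MAX_AVERAGE_DEPTH
        or info["average_overlaps"] > MAX_AVERAGE_OVERLAPS
    )


print(needs_reclustering(SAMPLE_OUTPUT))  # True
```

Tracking these two values over time for the same table is usually more informative than any single reading.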

Timestamp Column

If possible, use the TIMESTAMP_NTZ (no timezone) type for your timestamp column. This saves space, as the offset metadata does not need to be stored. It can also speed up queries, because it lets Snowflake avoid the extra work of internal timezone normalization that would otherwise take place during filtering and pruning of micro-partitions.

Questions?

If you need additional support optimizing your warehouse configuration for analytics, please reach out in the Slack support channel for your organization within Statsig Connect.

Debugging

Statsig shows you all the SQL being run, and any errors that occur. Errors are generally caused by changes to underlying tables or Metric Sources that cause a metric query to fail. Here are some best practices for debugging Statsig queries.

Use the Queries from your Statsig console

If a Pulse load fails, you can find all of the SQL queries and associated error messages in the Diagnostics tab. Click the copy button to run or debug a query within your warehouse. Look out for common errors:

- A query attempting to access a field that no longer exists on a table

- A table that doesn’t exist, usually because the Statsig user doesn’t have permission on a new table

Turbo Mode

Turbo Mode skips some enrichment calculations (in particular, some timeseries rollups) in order to very cheaply compute the latest snapshot of your data. With Turbo Mode, customers have run experiments on 150+ million users in less than 5 minutes on a Snowflake S cluster.

Ask!

Statsig’s support team is very responsive and will be happy to help you fix your issue and build tools to prevent it in the future, whether it’s due to system or user error.

Compute Cost Transparency

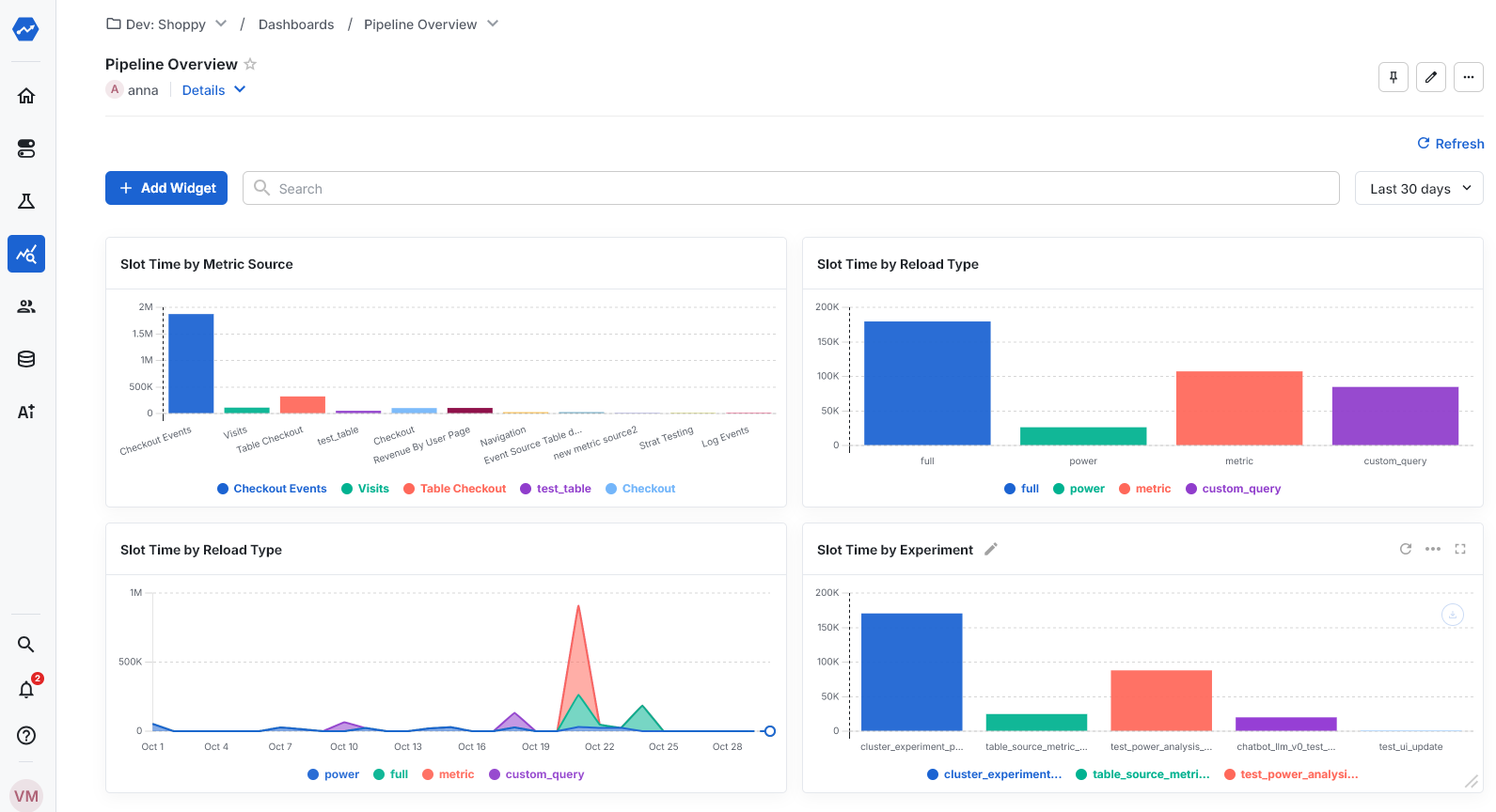

Statsig Warehouse Native lets you get a bird’s-eye view of the compute time that experiment analysis incurs in your warehouse. You can break this down by experiment, Metric Source, or query type to find what to optimize. Common questions the dashboard is designed to answer include:

- Which Metric Sources take the most compute time? (Useful for focusing optimization effort; see the best practices above.)

- What is the split of compute time between full loads, incremental loads, and custom queries?

- How is compute time distributed across experiments? (Useful for making sure the value realized and the compute costs incurred are roughly aligned.)

You can find this dashboard in the left nav under Analytics -> Dashboards -> Pipeline Overview.

Statsig writes a row to the pipeline_overview table for each job executed as part of a run. This table has the following schema:

| Column | Type | Description |
|---|---|---|
| ts | timestamp | Timestamp at which the DAG was created |
| job_type | string | Job type (see Pipeline Overview) |
| metric_source_id | string | ID of the Metric Source; only applicable to ‘Unit-Day Calculations’ jobs |
| assignment_source_id | string | Assignment Source ID of the experiment for which Pulse was loaded |
| job_status | string | Final state of the job (fail or success) |
| metrics | string | Metrics processed by the job |
| dag_state | string | Final state of the DAG (success, partial_failure, or failure) |
| dag_type | string | Type of DAG (full, incremental, metric, power, custom_query, autotune, assignment_source, stratified_sampling) |
| experiment_id | string | ID of the experiment for which Pulse was loaded, if applicable |
| dag_start_ds | string | Start of the date range being loaded |
| dag_end_ds | string | End of the date range being loaded |
| wall_time | number | Total time elapsed between DAG start and finish, in milliseconds |
| turbo_mode | boolean | Whether the DAG was run in Turbo Mode |
| dag_id | string | Internal identifier for the DAG |
| dag_duration | number | Number of days in the date range being loaded |
| is_scheduled | boolean | Whether the DAG was triggered by a scheduled run |
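As a sketch of how this table can answer the dashboard’s “full vs incremental vs custom query” question yourself, the Python below computes each dag_type’s share of wall_time. The rows are hypothetical, and only the dag_id, dag_type, and wall_time columns from the schema are used. Because the table has one row per job while wall_time describes the whole DAG, the sketch counts each dag_id once to avoid double counting. Note that wall_time is elapsed time, which is a proxy for warehouse cost rather than a billed amount.

```python
from collections import defaultdict

# Hypothetical rows from pipeline_overview (only the columns used here).
rows = [
    {"dag_id": "d1", "dag_type": "full", "wall_time": 420_000},
    {"dag_id": "d1", "dag_type": "full", "wall_time": 420_000},  # 2nd job, same DAG
    {"dag_id": "d2", "dag_type": "incremental", "wall_time": 60_000},
    {"dag_id": "d3", "dag_type": "incremental", "wall_time": 90_000},
    {"dag_id": "d4", "dag_type": "custom_query", "wall_time": 30_000},
]


def wall_time_share_by_dag_type(rows):
    """Return each dag_type's share of total wall_time, counting each DAG once."""
    per_dag = {}
    for row in rows:
        # Every job row repeats the DAG-level wall_time, so keep one per dag_id.
        per_dag[row["dag_id"]] = (row["dag_type"], row["wall_time"])

    totals = defaultdict(int)
    for dag_type, wall_ms in per_dag.values():
        totals[dag_type] += wall_ms

    grand_total = sum(totals.values())
    return {dag_type: ms / grand_total for dag_type, ms in totals.items()}


shares = wall_time_share_by_dag_type(rows)
print(shares)  # {'full': 0.7, 'incremental': 0.25, 'custom_query': 0.05}
```

Grouping by experiment_id or metric_source_id instead of dag_type follows the same pattern, though metric_source_id is only populated for ‘Unit-Day Calculations’ jobs.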