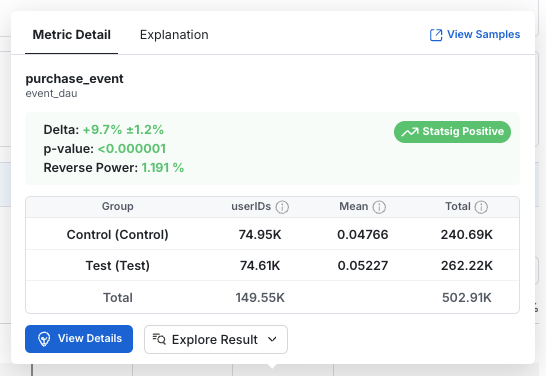

Tooltip Overview

A tooltip with key statistics and deeper information is shown if you hover over a metric in Pulse.

- Group: The name of the group of users. For Feature Gates, the “Pass” group is considered the test group while the “Fail” group is the control. In Experiments, these will be the variant names.

- Units: The number of distinct units included in the metric, for example distinct users for user_id experiments or distinct devices for stable_id experiments.

- Mean: The average per-unit value of the metric for each group.

- Total: The total metric value across all units in the group, over the time period of the analysis.

Calculation details

| Metric Type | Total Calculation | Mean | Units |
|---|---|---|---|
| event_count | Sum of events (99.9% winsorization) | Average events per user (99.9% winsorization) | All users |
| event_user | Sum of event DAU (distinct user-day pairs) | Average event_dau value per user per day. Note that we call this “Event Participation Rate” as this can be interpreted as the probability a user is DAU for that event. | All users |
| ratio | Overall ratio: sum(numerator values)/sum(denominator values) | Overall ratio | Participating users |
| sum | Total sum of values (99.9% winsorization) | Average value per user (99.9% winsorization) | All users |
| mean | Overall mean value | Overall mean value | Participating users |
| user: dau | Sum of daily active users | Average metric value per user per day. The probability that a user is DAU | All users |
| user: wau, mau_28day | Not shown | Average metric value per user per day. The probability that a user is xAU | All users |
| user: new_dau, new_wau, new_mau_28day | Count of distinct users that are new xAU at some point in the experiment | Fraction of users that are new xAU | All users |
| user: retention metrics | Overall average retention rate | Overall average retention rate | Participating users |
| user: L7, L14, L28 | Not shown | Average L-ness value per user per day | All users |
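As a rough illustration of how the Total, Mean, and Units columns differ between metric types, the sketch below computes them for a `sum` metric and a `ratio` metric on made-up per-user data. The function names and the data are hypothetical, winsorization is omitted for brevity, and treating "participating users" as users with a non-zero denominator is an assumption. It is not Statsig's implementation.

```python
def sum_metric_stats(values_by_user):
    """Units, Total, and Mean for a `sum` metric: every user in the group is a unit."""
    units = len(values_by_user)
    total = sum(values_by_user.values())
    return {"units": units, "total": total, "mean": total / units}


def ratio_metric_stats(numerators, denominators):
    """Overall ratio: sum(numerator values) / sum(denominator values)."""
    # Assumption: a user "participates" when their denominator is non-zero.
    participating = [user for user, d in denominators.items() if d > 0]
    num = sum(numerators.get(user, 0) for user in participating)
    den = sum(denominators[user] for user in participating)
    return {"units": len(participating), "ratio": num / den}


# Hypothetical group: purchase value per user, including users with no purchases.
purchase_value = {"u1": 10.0, "u2": 0.0, "u3": 30.0, "u4": 0.0}
print(sum_metric_stats(purchase_value))

# Hypothetical click-through ratio: clicks (numerator) over views (denominator).
clicks = {"u1": 2, "u3": 1}
views = {"u1": 4, "u2": 0, "u3": 6, "u4": 0}
print(ratio_metric_stats(clicks, views))
```

Note that the `sum` metric averages over all four users, while the ratio only counts the two users who had any views.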

p-Value

In Null Hypothesis Significance Tests, the p-value is the probability of observing a difference at least as extreme as the one measured when the experiment or test actually has no effect. A low p-value implies the observed difference is unlikely to be due to random chance alone. In hypothesis testing, a p-value threshold is used to decide which results are attributed to a real effect and which are plausibly due to random chance. (p-value calculation)

Reverse Power

Reverse power is the smallest effect size that an experiment can reliably detect in its current state (some studies refer to this value as ex-post MDE). It is calculated from the sample size and standard error of the control group. Importantly, reverse power does not depend on the observed effect size. In practice, reverse power answers questions like: given how the test actually played out, what is the smallest effect we have sufficient power (typically 80%) to detect?

For a two-sided test, the reverse power for a given metric X is computed using the following equation:

$$MDE = \left(Z_{1-\beta} + Z_{1-\alpha/2}\right) \cdot \sqrt{\frac{var(\Delta X)}{N}} \cdot \frac{1}{\overline{X}}$$

For a one-sided test, the reverse power for a given metric X is computed using the following equation:

$$MDE = \left(Z_{1-\beta} + Z_{1-\alpha}\right) \cdot \sqrt{\frac{var(\Delta X)}{N}} \cdot \frac{1}{\overline{X}}$$

where:

- $\overline{X}$ is the mean metric value across control users

- $var(\Delta X)$ is the population variance of delta

- $N$ is the observed number of units in the control group

- $Z_{1-\beta}$ is the standard Z-score for the selected power. Typically $1-\beta$ = 0.8 and $Z_{1-\beta}$ = 0.84

- $Z_{1-\alpha/2}$ and $Z_{1-\alpha}$ are the standard Z-scores for the selected significance level in a two-sided test and in a one-sided test, respectively.
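To make the reverse power calculation concrete, here is a minimal Python sketch. It assumes the relative MDE takes the common form (Z_power + Z_alpha) × sqrt(variance of delta / control units) / control mean. The function name, parameter names, and input numbers are hypothetical, and the exact formula Statsig uses may differ.

```python
from math import sqrt
from statistics import NormalDist


def reverse_power(control_mean, var_delta, n_control,
                  alpha=0.05, power=0.8, two_sided=True):
    """Relative ex-post MDE under the assumed formula described above."""
    std_normal = NormalDist()
    z_power = std_normal.inv_cdf(power)  # about 0.84 for 80% power
    # Two-sided tests split alpha across both tails; one-sided tests do not.
    tail = alpha / 2 if two_sided else alpha
    z_alpha = std_normal.inv_cdf(1 - tail)
    return (z_power + z_alpha) * sqrt(var_delta / n_control) / control_mean


# Hypothetical inputs: control mean 10, variance of delta 50, 10,000 control units.
two_sided_mde = reverse_power(10.0, 50.0, 10_000)
one_sided_mde = reverse_power(10.0, 50.0, 10_000, two_sided=False)
print(f"two-sided: {two_sided_mde:.2%}, one-sided: {one_sided_mde:.2%}")
```

With these inputs the two-sided value comes out at roughly 2% of the control mean, and the one-sided value is smaller because its significance Z-score is smaller. Neither depends on the observed effect, only on the mean, variance, and sample size.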

Detailed View

Click on View Details to access in-depth metric information. The detailed view contains three sections:

- Time Series: How the metrics evolve over time

- Raw Data: Group-level statistics

- Impact: How the experiment impacts the metric

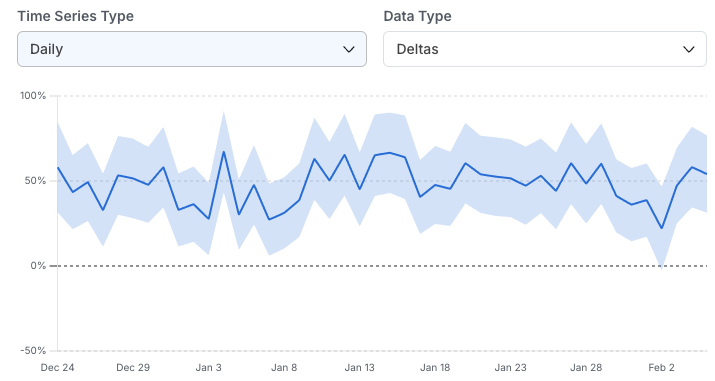

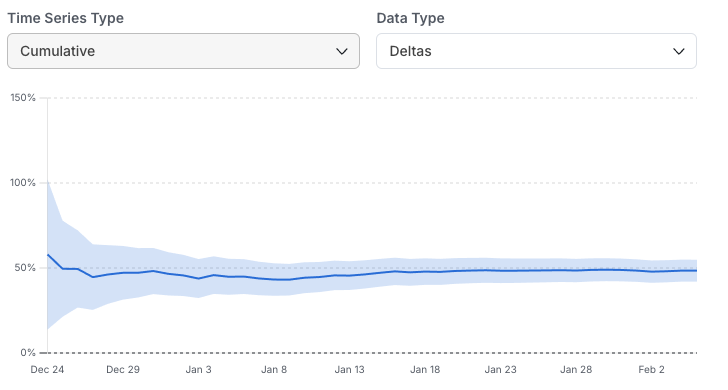

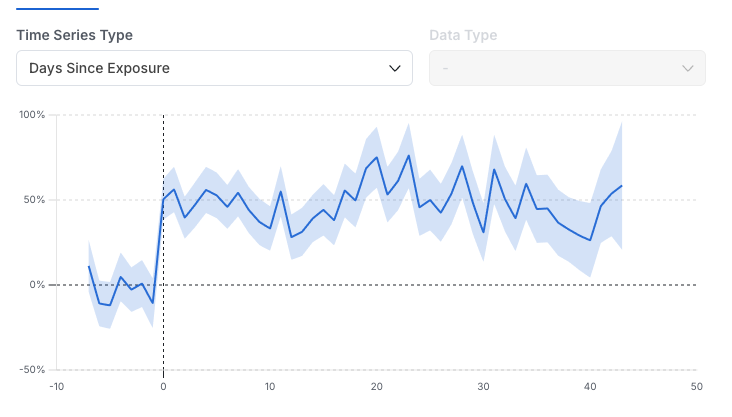

Time Series

In this view, select and drag as needed to zoom in on different time ranges. Three types of time series are available in the drop-down:

- Daily: The metric impact on each calendar day without aggregating days together. This is useful for assessing the variability of the metric day-over-day and the impact of specific events. It’s the recommended time series view for Holdouts, since it highlights the impact over time as new features are launched.

Raw Data

This view shows the group-level statistics needed to compute the metric deltas and confidence intervals. It includes Units, Mean, and Total (explained above), as well as the Standard Error of the mean (Std Err). Details on the statistical calculations are available here.

Impact

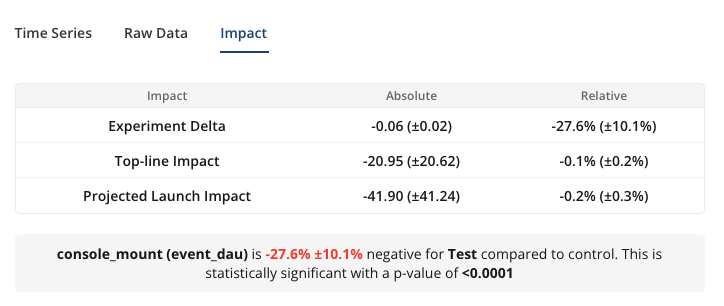

- Experiment Delta (absolute): The absolute difference of the Mean between the test and control groups, i.e. Test Mean - Control Mean. The p-value is shown to indicate whether the observed absolute difference is statistically significant.

- Experiment Delta (relative): The relative difference of the Mean, i.e. 100% × (Test Mean - Control Mean) / Control Mean.

- Topline Impact: The measured effect that the experiment is having on the overall topline metric each day, on average. It is computed on a daily basis and averaged across days in the analysis window. The absolute value is the net daily increase or decrease in the metric, while the relative value is the daily percentage change.

- Projected Launch Impact: An estimate of the daily topline impact we expect to see if a decision is made and the test group is launched to all users. This takes into account the layer allocation and the size of the test group. Assumes the targeting gate (if there is one) remains the same after launch.
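The two Experiment Delta values can be reproduced from the Raw Data statistics. The sketch below computes the absolute and relative delta from the group means, plus a two-sided p-value from the standard errors. It assumes independent groups and a simple two-sample z-test, which may not match the test Statsig applies. The function name and the numbers are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def impact_summary(test_mean, test_se, control_mean, control_se):
    """Absolute delta, relative delta (%), and a two-sided z-test p-value."""
    abs_delta = test_mean - control_mean
    rel_delta = 100.0 * abs_delta / control_mean
    # Assumption: groups are independent, so their variances add.
    se_delta = sqrt(test_se ** 2 + control_se ** 2)
    z_score = abs_delta / se_delta
    p_value = 2 * (1 - NormalDist().cdf(abs(z_score)))
    return {"abs_delta": abs_delta, "rel_delta_pct": rel_delta, "p_value": p_value}


# Hypothetical Raw Data values: Mean and Std Err for each group.
result = impact_summary(test_mean=10.5, test_se=0.2, control_mean=10.0, control_se=0.2)
print(result)
```

Here the test group is 0.5 higher in absolute terms and 5% higher in relative terms, with a p-value a little under 0.08, so the difference would not be significant at a 0.05 threshold.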