What’s the problem with looking early in a “standard” A/B test?

Traditional A/B testing best practices (t-tests, z-tests, etc.) dictate that experiment metrics should be read out only once, when the experiment reaches its target sample size (i.e. once the planned duration has elapsed and the desired number of units has been observed). We call this a “Fixed Horizon Test”, because when designing the experiment you set the number of units you wish to observe and (ideally) commit to analyzing the results only once that dataset is complete. Continuously monitoring an experiment (i.e. “peeking”) in order to make decisions, however, inflates the false positive rate (a.k.a. the peeking problem), which can end up much higher than the rate implied by your chosen significance level.

How does peeking increase decision error rates?

Continuous monitoring inflates false positives because any time you consider ending an experiment early, you risk drawing an incorrect conclusion. Remember, at the core of a standard hypothesis test you are deciding whether to “reject the null” hypothesis (and accept the alternative) or not to reject it. Any time you look early and allow the possibility of deciding early, you create another chance to reject the null hypothesis even when the null hypothesis is actually correct.

Why would early results be “wrong”?

Metric values and p-values always fluctuate to some extent because of noise, and results can move into and out of statistical significance even when there is no real underlying effect. This noise comes from random unit assignment and from unpredictable human behavior, and it can’t be entirely removed from an experiment. Not every test sees the same amount of noise, however: like so many things, it depends on what you’re testing and who your users are. Noise also varies over the life of a test, because adding more users and observing them for longer tends to help random fluctuations even out.

Peeking, however well-intentioned, always introduces some selection bias if it can move the date of a readout. When an experimenter makes any early decision about results (e.g. “is the result stat-sig, can we ship a variant early?”), they increase the chance that the decision rests on a temporary snapshot of constantly fluctuating results. They may be cherry-picking a stat-sig result that would never have been seen had the data been analyzed only once, at the full, pre-determined end of the experiment. Unfortunately, with frequentist A/B test procedures, such early decisions can only increase the false positive rate (declaring an experimental effect when there really is none), even when the intention is to make a less biased decision based on the statistics.

What is Sequential Testing for an A/B test?
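Sequential testing is designed to address the peeking problem described above, and a quick simulation shows how large that problem can be. The minimal Python sketch below runs A/A tests (no true effect) and compares two policies: reading out once at the end, versus stopping at the first look where a standard two-sided z-test is stat-sig. It assumes a normally distributed metric with known variance and equal-sized batches of users between looks, so the z-statistic after k looks is a sum of k independent standard normals divided by sqrt(k). The function `peeking_false_positive_rate` is a hypothetical helper for illustration, not part of any Statsig API.

```python
import math
import random


def peeking_false_positive_rate(n_sims=20_000, n_peeks=20, z_crit=1.96, seed=0):
    """Simulate A/A tests (no true effect) under repeated looks.

    Returns (rate stat-sig at the final look only,
             rate stat-sig at ANY look).
    ASSUMPTION: normal metric with known variance and equal-sized batches
    of users between looks, so the z-statistic after k looks is a sum of
    k independent standard normals divided by sqrt(k).
    """
    rng = random.Random(seed)
    final_hits = 0
    any_hits = 0
    for _ in range(n_sims):
        running_sum = 0.0
        crossed = False
        z = 0.0
        for k in range(1, n_peeks + 1):
            running_sum += rng.gauss(0.0, 1.0)  # standardized batch under the null
            z = running_sum / math.sqrt(k)
            if abs(z) > z_crit:
                crossed = True  # an experimenter who peeks would stop and ship here
        any_hits += crossed
        final_hits += abs(z) > z_crit
    return final_hits / n_sims, any_hits / n_sims


if __name__ == "__main__":
    fixed, peeking = peeking_false_positive_rate()
    print(f"false positives, single readout: {fixed:.3f}")
    print(f"false positives, stop at first stat-sig look: {peeking:.3f}")
```

The single-readout rate should land near the nominal 5%, while the "stop at the first stat-sig look" rate comes out substantially higher, even though no variant actually has an effect.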

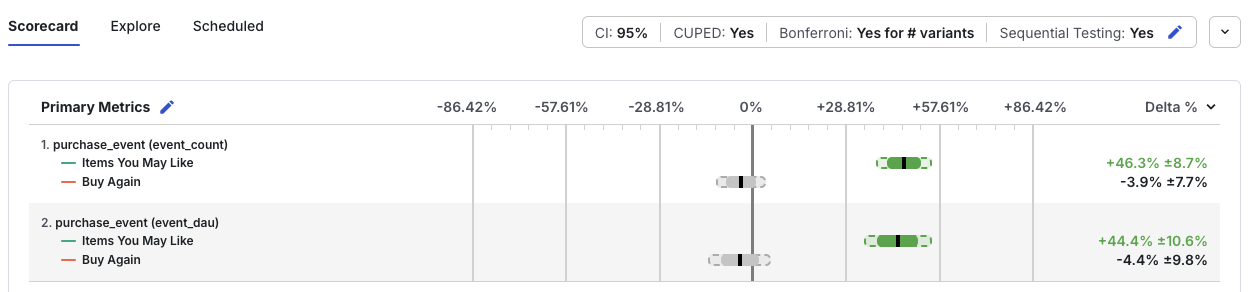

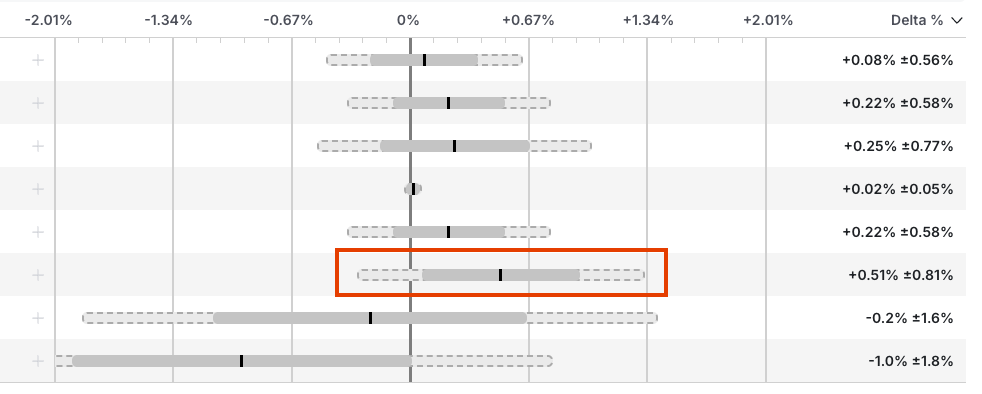

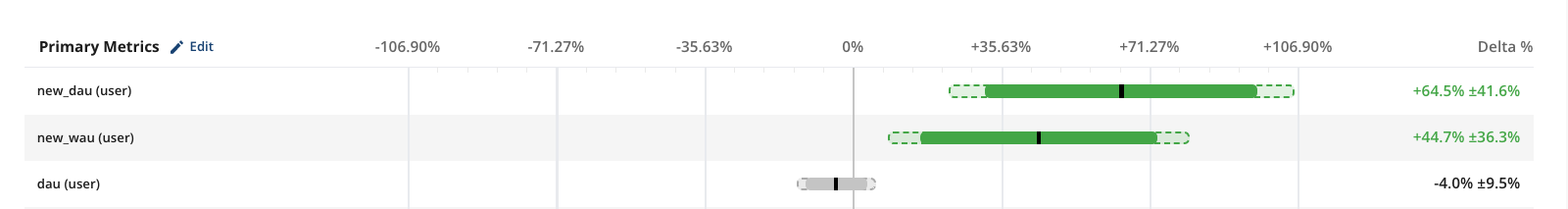

In Statsig’s implementation of Sequential Testing, the experimental results for each preliminary analysis window are adjusted to compensate for the increased false positive rate associated with peeking. Statsig adjusts your p-values and confidence intervals automatically, and you can see this in the Results tab. Sequential testing is especially useful in two situations:

- Unexpected regressions: Sometimes experiments have bugs or unintended consequences that severely impact key metrics. Sequential testing helps identify these regressions early and distinguishes significant effects from random fluctuations.

- Opportunity cost: This arises when a significant loss may be incurred by delaying the experiment decision, such as launching a new feature ahead of a major event or fixing a bug. If sequential testing shows an improvement in the key metrics, an early decision could be made. But use caution: An early stat-sig result for certain metrics doesn’t guarantee sufficient power to detect regressions in other metrics. Limit this approach to cases where only a small number of metrics are relevant to the decision.

Quick Guides

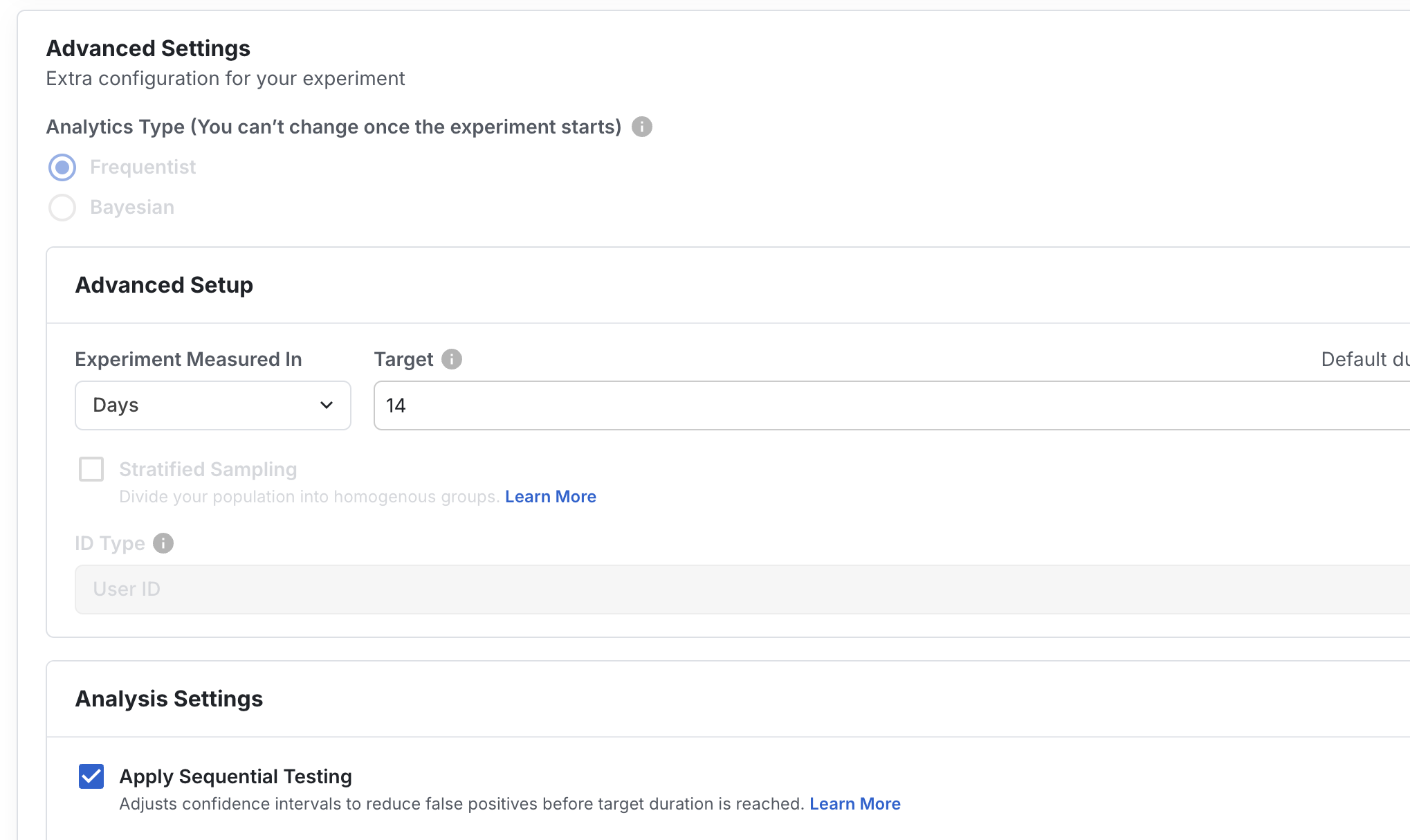

Enabling Sequential Testing Results

In the Setup tab of your experiment, with Frequentist selected as your Analytics Type, you can enable Sequential Testing under the Analysis Settings section. This setting can be toggled at any time during the life of the experiment, and it does not need to be enabled prior to the start of the experiment.

Interpreting Sequential Testing Results

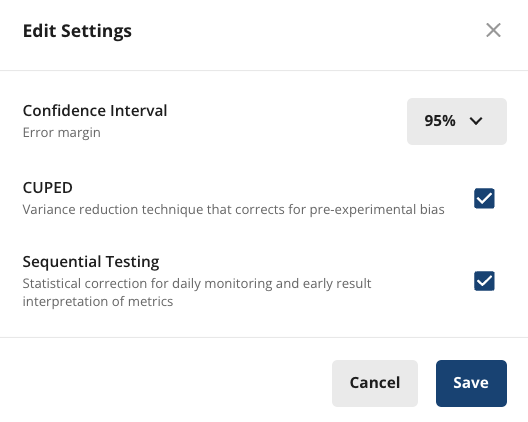

Click on Edit at the top of the metrics section in Pulse to toggle Sequential Testing on/off.

Statsig’s Implementation of Sequential Testing

Two-Sided Tests

Confidence Intervals

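Statsig uses mSPRT (the mixture Sequential Probability Ratio Test), based on the approach proposed by Zhao et al. With $\bar{X}_t$ and $\bar{X}_c$ the test and control group means, the two-sided Sequential Testing confidence interval with significance level $\alpha$ is given by:

$$(\bar{X}_t - \bar{X}_c) \pm Z_{seq}\sqrt{V_n}$$

where:

- $Z_{seq}$ is the z-critical value, modified for sequential testing:

  $$Z_{seq} = \sqrt{\frac{V_n + \tau}{\tau}\,\ln\left(\frac{V_n + \tau}{\alpha^2 V_n}\right)}$$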

- $V_n$ is the variance of the difference in means. It can be obtained from the sample variances $s_t^2, s_c^2$ and sample sizes $n_t, n_c$ of the test and control groups:

  $$V_n = \frac{s_t^2}{n_t} + \frac{s_c^2}{n_c}$$

- $\tau$ is the mixing parameter, i.e. the variance of the normal mixing distribution that mSPRT places over possible effect sizes.

- $Z$ is the z-critical value used in the non-sequential test for the desired significance level $\alpha$ (1.96 for the standard $\alpha = 0.05$)
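The two-sided interval can be computed directly from group summary statistics. The minimal Python sketch below assumes the standard mSPRT normal-mixture critical value, Z_seq = sqrt((V_n + τ)/τ · ln((V_n + τ)/(α² V_n))), with τ treated as the variance of the mixing distribution. Because this sketch does not reproduce how Statsig chooses τ, the caller passes it in; the function names are hypothetical.

```python
import math
from statistics import NormalDist


def delta_variance(var_t: float, n_t: int, var_c: float, n_c: int) -> float:
    """V_n: variance of the difference in group means, built from each
    group's sample variance and sample size."""
    return var_t / n_t + var_c / n_c


def z_seq_two_sided(v_n: float, tau: float, alpha: float = 0.05) -> float:
    """Sequential critical value from the mSPRT normal-mixture boundary.

    ASSUMPTION: tau is the mixing-distribution variance, supplied by the
    caller; this sketch does not choose it.
    """
    return math.sqrt((v_n + tau) / tau * math.log((v_n + tau) / (alpha**2 * v_n)))


def sequential_ci(mean_t, var_t, n_t, mean_c, var_c, n_c, tau, alpha=0.05):
    """Two-sided sequential CI for the absolute difference in means."""
    v_n = delta_variance(var_t, n_t, var_c, n_c)
    half_width = z_seq_two_sided(v_n, tau, alpha) * math.sqrt(v_n)
    delta = mean_t - mean_c
    return delta - half_width, delta + half_width


if __name__ == "__main__":
    # Hypothetical summary stats; tau = V_n is an arbitrary illustrative choice.
    v = delta_variance(4.0, 5000, 4.0, 5000)
    print("sequential CI:", sequential_ci(10.2, 4.0, 5000, 10.0, 4.0, 5000, tau=v))
    z_fixed = NormalDist().inv_cdf(0.975)  # about 1.96 for alpha = 0.05
    print("fixed-horizon half-width:", z_fixed * math.sqrt(v))
```

For the same data, the sequential interval is wider than the fixed-horizon interval built with Z = 1.96; that extra width is the price paid for being allowed to look at any time.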

p-Values

It’s possible to produce p-values for sequential testing that are consistent with the expanded confidence intervals above by modifying our p-value methods. We want to evaluate the mSPRT test so that our Type I error remains approximately equal to $\alpha$, and so that the sequential testing p-value is consistent with the expanded confidence interval (i.e. a CI that includes 0.0% should have a p-value ≥ $\alpha$, and one that excludes 0.0% should have a p-value < $\alpha$).

Our observed z-statistic (i.e. z-score) remains unchanged. Instead of evaluating it on a standard normal distribution as we usually do, we evaluate it against another normal distribution with a mean of zero and standard deviation $\sigma$. For a two-sided test, since we want the probability of an observed $|z|$ exceeding $Z_{seq}$ (assuming the null hypothesis is true) to be limited to $\alpha$, we can find the unknown parameter $\sigma$ by solving:

$$\alpha = 1 - \operatorname{erf}\left(\frac{Z_{seq}}{\sigma\sqrt{2}}\right) \quad\Longrightarrow\quad \sigma = \frac{Z_{seq}}{\sqrt{2}\,\operatorname{erf}^{-1}(1 - \alpha)}$$

where $\operatorname{erf}^{-1}$ is the inverse error function. From here we can compute the two-sided sequential testing p-value as:

$$p = 1 - \operatorname{erf}\left(\frac{|z|}{\sigma\sqrt{2}}\right)$$

where $z$ is the observed z-statistic (i.e. z-score) as usual.

One-Sided Tests

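We can modify each step for one-sided sequential testing. For a one-sided test of an increase, the confidence interval and p-value become:

$$\left[(\bar{X}_t - \bar{X}_c) - Z_{seq,1}\sqrt{V_n},\ \infty\right)$$

$$p = \frac{1}{2}\left(1 - \operatorname{erf}\left(\frac{z}{\sigma\sqrt{2}}\right)\right)$$

For a test of a decrease, the interval is mirrored and $z$ is replaced by $-z$. Here:

- $Z_{seq,1}$ is the one-sided test z-critical value, modified for sequential testing:

  $$Z_{seq,1} = \sqrt{\frac{V_n + \tau}{\tau}\,\ln\left(\frac{V_n + \tau}{(2\alpha)^2 V_n}\right)}$$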

- $V_n$ is the same as for two-sided tests.

- $\tau$ is the mixing parameter for the one-sided test.

- $Z_1$ is the one-sided z-critical value used in the non-sequential test for the desired significance level $\alpha$ (1.645 for the standard $\alpha = 0.05$)

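- $\sigma$ is solved via:

  $$\alpha = \frac{1}{2}\left(1 - \operatorname{erf}\left(\frac{Z_{seq,1}}{\sigma\sqrt{2}}\right)\right) \quad\Longrightarrow\quad \sigma = \frac{Z_{seq,1}}{\sqrt{2}\,\operatorname{erf}^{-1}(1 - 2\alpha)}$$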

- $z$ is the (signed) observed z-statistic (i.e. z-score), as usual
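The p-value adjustment for both test types fits in a few lines of Python. Python's `math` module has no inverse error function, so this sketch relies on the identities √2·erf⁻¹(1 − α) = Φ⁻¹(1 − α/2) and √2·erf⁻¹(1 − 2α) = Φ⁻¹(1 − α), computed with `statistics.NormalDist().inv_cdf`. With these identities, σ is simply the sequential critical value divided by the usual fixed-horizon critical value (1.96 or 1.645 at α = 0.05). The sequential critical values are taken as inputs rather than computed here, and the function names and the `expect_increase` flag are hypothetical.

```python
import math
from statistics import NormalDist


def sigma_two_sided(z_seq: float, alpha: float = 0.05) -> float:
    # Solve alpha = 1 - erf(z_seq / (sigma * sqrt(2))) for sigma.
    # sqrt(2) * erfinv(1 - alpha) == NormalDist().inv_cdf(1 - alpha / 2).
    return z_seq / NormalDist().inv_cdf(1 - alpha / 2)


def p_value_two_sided(z: float, z_seq: float, alpha: float = 0.05) -> float:
    sigma = sigma_two_sided(z_seq, alpha)
    return 1 - math.erf(abs(z) / (sigma * math.sqrt(2)))


def sigma_one_sided(z_seq_1: float, alpha: float = 0.05) -> float:
    # Solve alpha = (1 - erf(z_seq_1 / (sigma * sqrt(2)))) / 2 for sigma.
    # sqrt(2) * erfinv(1 - 2 * alpha) == NormalDist().inv_cdf(1 - alpha).
    return z_seq_1 / NormalDist().inv_cdf(1 - alpha)


def p_value_one_sided(z: float, z_seq_1: float, alpha: float = 0.05,
                      expect_increase: bool = True) -> float:
    sigma = sigma_one_sided(z_seq_1, alpha)
    signed = z if expect_increase else -z  # flip sign for a test of a decrease
    return 0.5 * (1 - math.erf(signed / (sigma * math.sqrt(2))))


if __name__ == "__main__":
    # Hypothetical: observed z = 2.5 against a sequential critical value of 3.0.
    print(p_value_two_sided(2.5, 3.0))  # above 0.05, so not yet stat-sig
```

By construction, an observed z exactly at the sequential critical value gets a p-value of exactly α, which is what keeps the p-value consistent with whether the sequential CI includes 0.0%.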