This solution is still functional, but setup can be manual and time-consuming, and error handling is minimal. We encourage you to check out the Data Warehouse Ingestion solution instead.

Overview

The BigQuery integration allows you to export events and/or metrics from your BigQuery instance to Statsig. To enable the BigQuery integration with Statsig:

- Set up tables in your BigQuery instance.

- Give Statsig’s service account corresponding permissions on the tables.

- Enable the BigQuery integration in the Statsig console.

- Insert data into tables and mark data to be ready for import.

Set Up Tables in your BigQuery instance

- In your project, create a new dataset where the tables for Statsig should live. You can use an existing dataset, but you will be giving the Statsig service account some permissions on this dataset later.

- Create a table for pre-computed metrics, and another for signalling when data has landed with the statement below:

Give permissions to Statsig’s service account

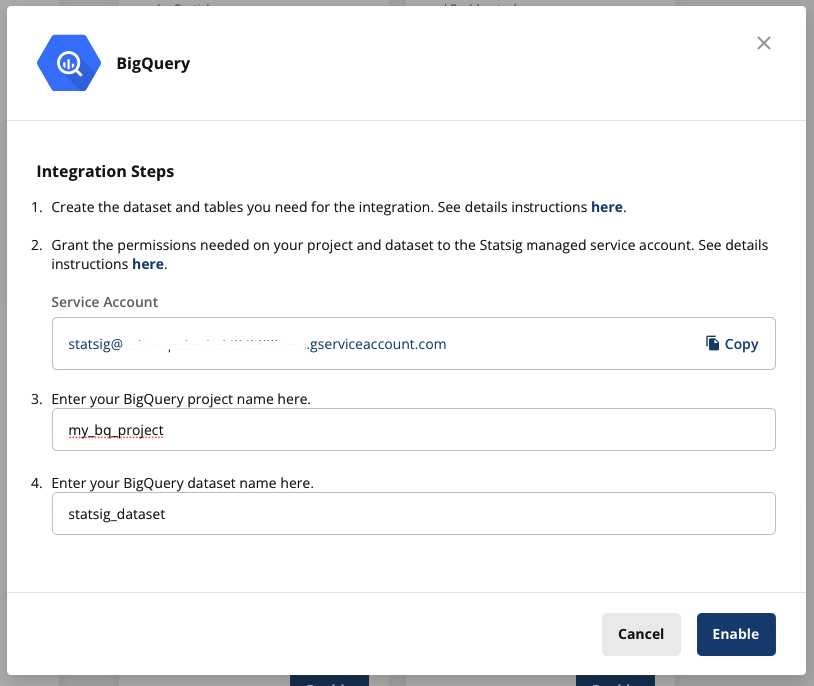

- In your Statsig console, navigate to Project Settings -> Integrations -> BigQuery. Here you will see the Statsig service account. Copy it to be used later.

-

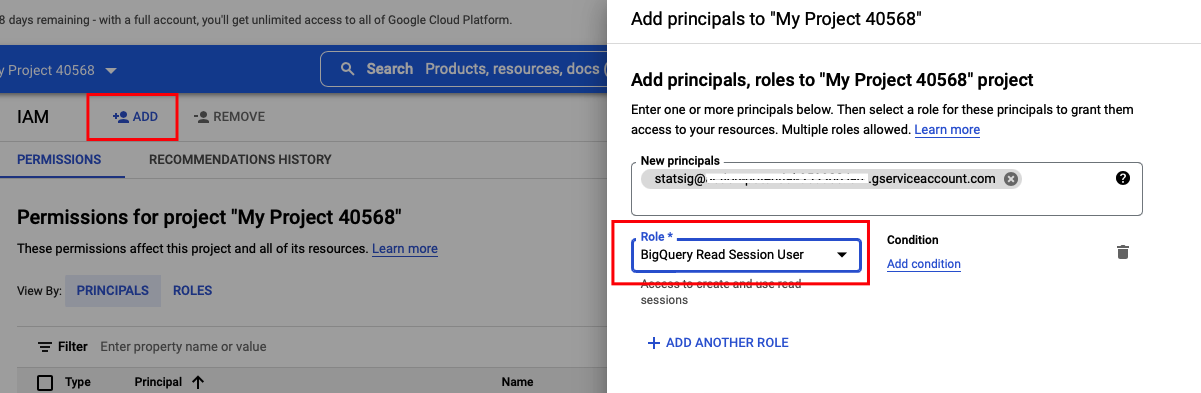

In your BigQuery’s IAM & Admin settings, add the Statsig service account you copied in step 1 as a new principal for your project, and give it “BigQuery Read Session User” role.

-

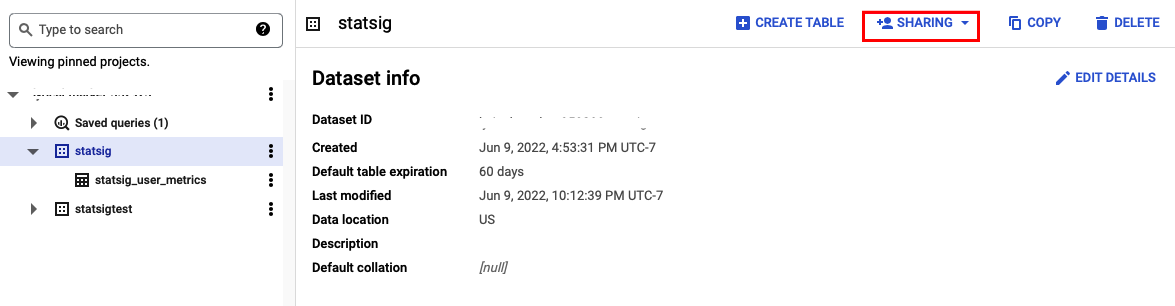

Navigate to your BigQuery SQL Workspace, choose the dataset, click on ”+ Sharing” -> “Permissions” -> “Add Principal” to give the same Statsig service account “BigQuery Data Viewer” role on the dataset.

-

Back to your Statsig console’s BigQuery integration dialog, and enter your BigQuery project and dataset name. Then click “Enable”.

Insert data for import, and signal when it is ready

To load data into Statsig, insert it into statsig_user_metrics, then mark a day as completed in statsig_user_metrics_signal once all of the data for that day is loaded.

Your data should conform to these definitions and rules to avoid errors or delays:

| Column | Description | Rules |
|---|---|---|
| unit_id | The unique user identifier this metric is for. This is not necessarily a user_id; it could be a custom_id of some kind. | |
| id_type | The id_type the unit_id represents. | Must be a valid id_type. The default Statsig types are user_id/stable_id, but you may have generated custom id_types. |
| date | Date of the daily metric | |
| timeuuid | A unique timeuuid for the event | This should be a timeuuid, but any unique id will suffice. If not provided, the table defaults to generating a UUID. |
| metric_name | The name of the metric | Not null. Length < 128 characters. |
| metric_value | A numeric value for the metric | Either metric_value, or both numerator and denominator, must be provided for Statsig to process the metric. See details below. |
| numerator | Numerator for metric calculation | See above, and details below. |
| denominator | Denominator for metric calculation | See above, and details below. |
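The rules in the table above can be checked before rows are written to statsig_user_metrics. The following is a minimal sketch of such a pre-load check, not part of any Statsig SDK: the function name validate_metric_row, the dict-based row shape, and the DEFAULT_ID_TYPES set are all assumptions made for illustration. Add your own custom id_types to the set if you use them.

```python
# Assumption: the default Statsig id_types named in the table above.
# Custom id_types must be added by you; matching is case sensitive.
DEFAULT_ID_TYPES = {"user_id", "stable_id"}


def validate_metric_row(row, valid_id_types=DEFAULT_ID_TYPES):
    """Return a list of rule violations for one metrics row (a plain dict)."""
    errors = []

    # id_type must be one of the known types (exact, case-sensitive match).
    if row.get("id_type") not in valid_id_types:
        errors.append("id_type is not a known id_type (check case and spelling)")

    # metric_name must be present and shorter than 128 characters.
    name = row.get("metric_name")
    if name is None:
        errors.append("metric_name must not be null")
    elif len(name) >= 128:
        errors.append("metric_name must be shorter than 128 characters")

    # Either metric_value, or both numerator and denominator, is required.
    has_value = row.get("metric_value") is not None
    has_ratio = (
        row.get("numerator") is not None and row.get("denominator") is not None
    )
    if not (has_value or has_ratio):
        errors.append("provide metric_value, or both numerator and denominator")

    return errors
```

An empty list means the row passes these checks. The sketch does not verify that the ids match the format logged by your SDKs, which still needs to be confirmed separately.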

Note on metric values

If you provide both a numerator and a denominator value for any record of a metric, we’ll treat that metric as a ratio metric: we’ll exclude users who do not have a denominator value from analysis, and recalculate the metric value ourselves from the numerator and denominator fields. If you provide only a metric_value, we’ll use the metric_value for analysis. In this case, we’ll impute 0 for users without a metric value in experiments.

Scheduling

Because you may be streaming events to your tables or have multiple ETLs pointing to your metrics table, Statsig relies on you to signal that your metrics/events for a given day are complete. When a day is fully loaded, insert that date as a row in the appropriate signal table: statsig_user_metrics_signal for metrics or statsig_events_signal for events. For example, once all of your metrics data is loaded into statsig_user_metrics for 2022-04-01, you would insert 2022-04-01 into statsig_user_metrics_signal.

Statsig expects you to load data in order. For example, if you have loaded up to 2022-04-01 and signal that 2022-04-03 has landed, we will wait for you to signal that 2022-04-02 has landed and load that data before we ingest data from 2022-04-03.
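The ordering rule can be modeled in a few lines. This is only an illustrative sketch of the behavior described above, not Statsig's actual implementation; the function name next_loadable_dates and its arguments are hypothetical.

```python
from datetime import date, timedelta


def next_loadable_dates(last_loaded, signaled):
    """Return the signaled dates that can be ingested now, in order.

    Walks forward one day at a time from last_loaded and stops at the
    first day that has not been signaled, so any later signaled days
    wait behind the gap.
    """
    loadable = []
    day = last_loaded + timedelta(days=1)
    while day in signaled:
        loadable.append(day)
        day += timedelta(days=1)
    return loadable
```

With last_loaded set to 2022-04-01 and only 2022-04-03 signaled, the result is empty because 2022-04-02 is missing. Once 2022-04-02 is also signaled, both days become loadable, in date order.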

This ingestion pipeline is in beta and does not currently support automatic backfills or updates to data once it’s been ingested. Only signal that these tables are loaded after you’ve run data quality checks!

Checklist

These are common errors we’ve run into. Please go through and make sure your setup looks good!

- The id_type is set correctly

  - Default types are user_id or stable_id. If you have custom ids, make sure that the capitalization and spelling match, as these are case sensitive (you can find your custom ID types by going to your Project Settings in the Statsig console).

- Your ids match the format of ids logged from SDKs

  - In some cases, your data warehouse may transform IDs. This may mean we can’t join your experiment or feature gate data to your metrics to calculate pulse or other reports. You can go to the Metrics page of your project and view the log stream to check the format of the ids being sent (either User ID, or a custom ID in User Properties) to confirm they match.

- Monitoring is limited today, but you should be able to check your BigQuery query history for the Statsig service account to understand which data is being pulled, and whether queries are not executing (no history) or are failing.

- You should see polling queries within a few hours of setting up your integration.

- If you have a signal date in the last 28 days, you should see a select statement for data from the earliest signal date in that window.

- If that query fails, try running it yourself to understand whether there is a schema issue.

- If data is loading, it’s likely we’re just processing. For new metrics, give it a day to catch up. If data isn’t loaded after a day or two, please check in with us. The most common cause of metrics catalog failures is an id_type mismatch.