In this guide, you will create and implement your first experiment in Statsig from end to end. There are many types of experiments you can set up in Statsig, but this guide will walk through the most common one: an A/B test. By the end of this tutorial, you will have:

- Created a new user-level Experiment in the Statsig console, with parameters set for a Control and Experiment group

- Checked the experiment in your application code using the Statsig Client SDK `getExperiment` function

Prerequisites

- You already have a Statsig account

- You already installed the Statsig Client SDK in an existing application

Step 1: In the Statsig console

Create an experiment

- Log in to the Statsig console at https://console.statsig.com/ and navigate to Experiments in the left-hand navigation panel.

- Click the Create button, enter the name `Quickstart Experiment`, and enter a brief hypothesis. For example, “A new homepage banner will improve engagement.”

- Click Create.

Setup scorecards

Next, in the Experiment Setup tab, we will fill out scorecards for the metrics you are interested in watching. For the purposes of this SDK tutorial, we’ll leave most of the settings at their defaults.

- In the Hypothesis card, enter a hypothesis for what you are testing: “Showing a homepage banner will increase user engagement, measured by daily_stickiness.”

- In the Primary Metrics card, add a new metric called `daily_stickiness`.

- We will leave the Secondary Metrics and Duration settings at their default values. For more details on experiment setup, see the product docs on Creating an experiment.

Configure groups and parameters

Next, we’re going to configure the experiment. By default, the experiment is set up to run on 100% of users; 50% of those users are assigned to a Control group and 50% are assigned to an Experiment group.

- In the Groups and Parameters card, create a parameter called `enable_banner` and select the type as `Boolean`.

- Set `enable_banner` to `false` for the Control group and `true` for the Experiment group.

- Click Save.

- Hit Save in the bottom right to finalize this experiment setup.

- This experiment is not live yet. To launch it, click Start at the top of the page. This will make production traffic eligible for the experiment.

Experiment parameters and groups cannot be configured after launching an experiment. If you want to test in a staging environment, check out our docs on using environments.
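To make the console setup concrete, here is a minimal sketch that models it as plain data. The type and field names (`ExperimentSetup`, `sizePercent`, `allocationPercent`, and so on) are hypothetical and exist only for illustration; they are not Statsig API types, and the values simply mirror what was entered in the console above.

```typescript
// Hypothetical local model of the experiment configured in the console.
// These are NOT Statsig SDK types; they only mirror the settings above.
type GroupName = "Control" | "Experiment";

interface ExperimentGroup {
  name: GroupName;
  sizePercent: number; // share of allocated users placed in this group
  parameters: { enable_banner: boolean };
}

interface ExperimentSetup {
  name: string;
  allocationPercent: number; // share of all users eligible for the experiment
  primaryMetrics: string[];
  groups: ExperimentGroup[];
}

const quickstartSetup: ExperimentSetup = {
  name: "Quickstart Experiment",
  allocationPercent: 100,
  primaryMetrics: ["daily_stickiness"],
  groups: [
    { name: "Control", sizePercent: 50, parameters: { enable_banner: false } },
    { name: "Experiment", sizePercent: 50, parameters: { enable_banner: true } },
  ],
};

// Sanity check: group sizes should add up to 100% of the allocated users.
function groupSizesAreValid(setup: ExperimentSetup): boolean {
  const total = setup.groups.reduce((sum, group) => sum + group.sizePercent, 0);
  return total === 100;
}
```

Writing the setup down this way can help when reviewing it before launch, since groups and parameters are locked once the experiment starts.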

Step 2: In your application code

Check the experiment

This tutorial assumes you have already installed the Statsig Client SDK in an existing application.

Use the SDK’s `getExperiment` function to check the experiment and read the `enable_banner` parameter that we created earlier.

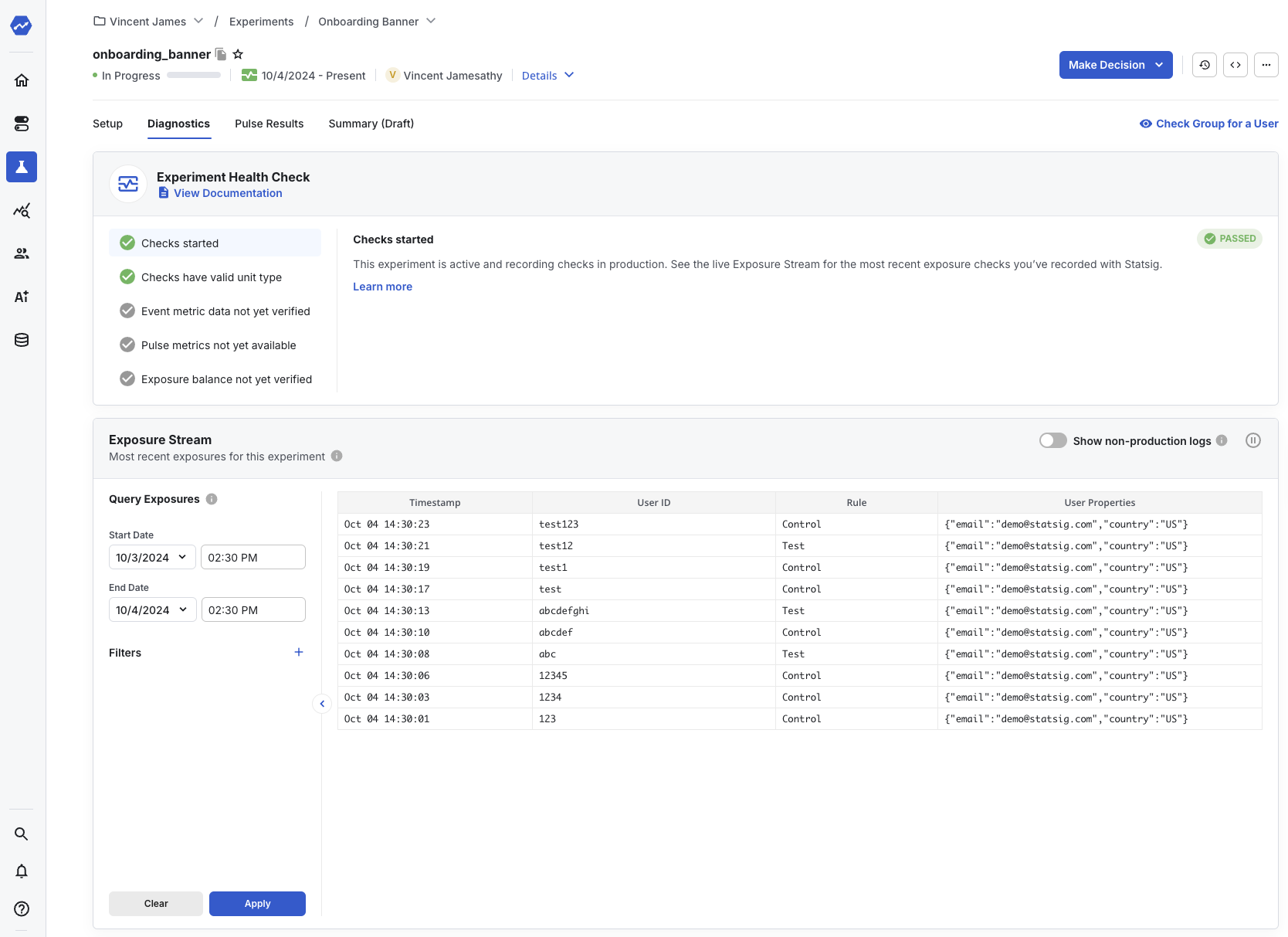
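The check described above might look like the following sketch. It assumes the client exposes `getExperiment(name)` and that the returned object has a `get(parameter, fallback)` accessor, which is modeled here with small local interfaces rather than the SDK's own types. The experiment ID `quickstart_experiment` is also an assumption; copy the actual ID from the Statsig console. The `fakeClient` helper is a hypothetical stand-in so the logic can be exercised without a network connection.

```typescript
// Minimal shapes modeled on the Statsig client SDK (an assumption, not the
// SDK's own type definitions): getExperiment(name) returns an object whose
// get(parameter, fallback) yields the parameter value for the current user.
interface ExperimentLike {
  get<T>(parameterName: string, fallback: T): T;
}

interface ExperimentClient {
  getExperiment(experimentName: string): ExperimentLike;
}

// Assumed experiment ID; use the ID shown in the Statsig console.
const EXPERIMENT_ID = "quickstart_experiment";

function shouldShowBanner(client: ExperimentClient): boolean {
  const experiment = client.getExperiment(EXPERIMENT_ID);
  // Fall back to false (the Control value) if the parameter is unavailable.
  return experiment.get("enable_banner", false);
}

// Hypothetical in-memory stand-in for the SDK client, for local testing only.
function fakeClient(parameters: Record<string, unknown>): ExperimentClient {
  return {
    getExperiment: () => ({
      get<T>(parameterName: string, fallback: T): T {
        const value = parameters[parameterName];
        // Return the fallback when the value is missing or has the wrong type.
        return typeof value === typeof fallback ? (value as T) : fallback;
      },
    }),
  };
}
```

In application code, you would pass your initialized Statsig client to `shouldShowBanner` and call your own banner-rendering function, such as `showBanner()`, only when it returns `true`.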

Step 3: Monitor experiment diagnostics

Now, if you run your code, the `showBanner()` function is called for users in the Experiment group. If you navigate to your experiment in the Statsig console and select the Diagnostics tab, you can see a live log stream of checks and events related to this experiment from your application.

You can read more about the Diagnostics tab in the product docs.

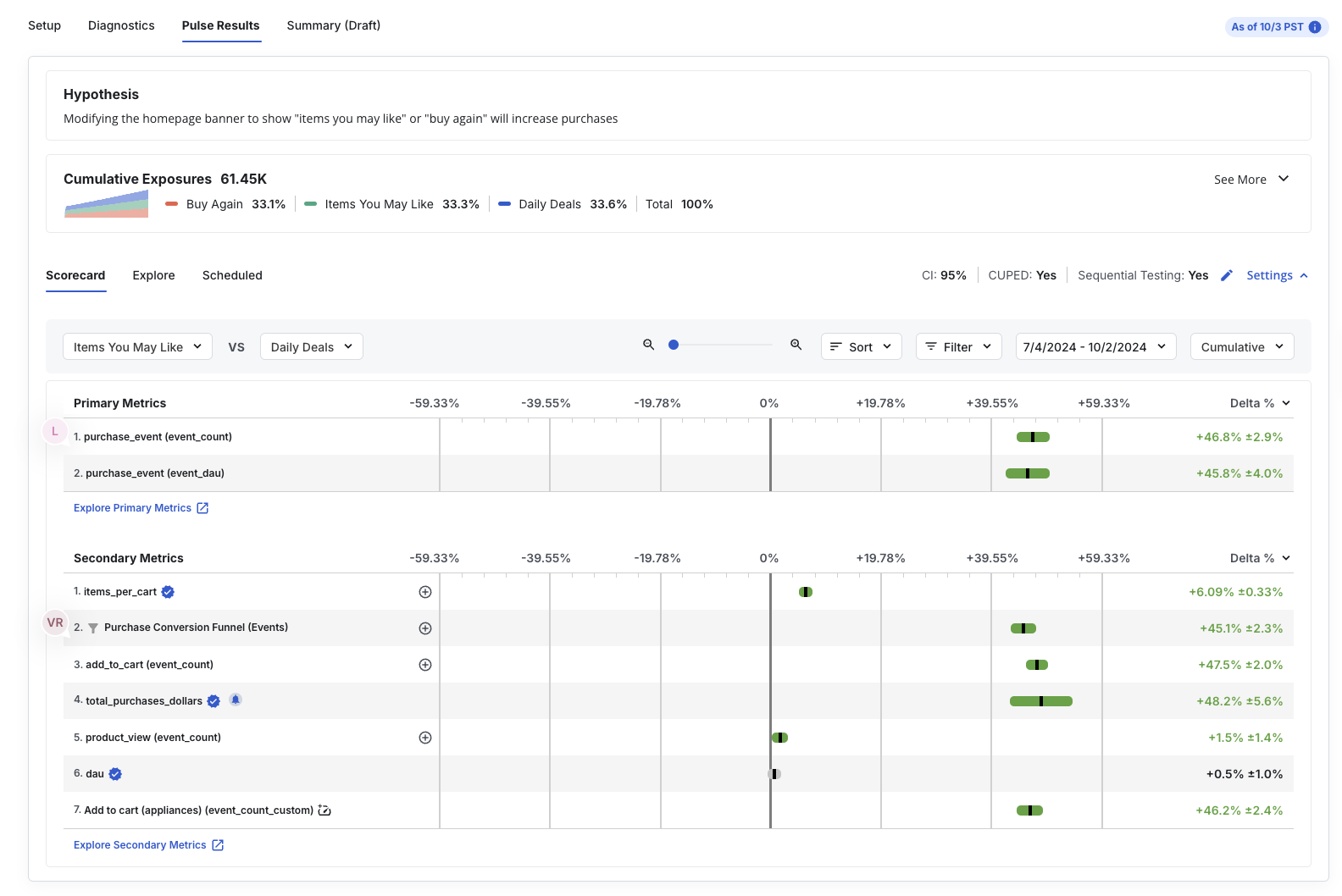

Step 4: Read experiment results

Within 24 hours of starting your experiment, you’ll see the cumulative exposures in the Results tab in the Statsig console, and how they have impacted the various scorecard metrics you set up in the Setup panel. Here’s a sample of what that looks like:

Next steps

In this tutorial, we used `daily_stickiness` as the primary metric. This metric is automatically logged to Statsig for you, so you don’t need to log it explicitly.

If you want to measure other events or custom metrics that happen downstream after checking the experiment, you’ll need to log them to Statsig. Continue to the next tutorial to learn how to log custom events and metrics with the Statsig SDK.
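As a preview, logging a downstream event could look like the sketch below. It assumes the client exposes a `logEvent(eventName, value, metadata)` method, modeled here with a local interface; the event name `banner_click`, the `placement` metadata key, and the `recordingLogger` helper are all hypothetical. The next tutorial covers the actual logging API in detail.

```typescript
// Minimal shape modeled on the SDK's event logging call (an assumption):
// an event name, an optional value, and optional string metadata.
interface EventLogger {
  logEvent(
    eventName: string,
    value?: string | number,
    metadata?: Record<string, string>
  ): void;
}

// Hypothetical custom event: a user clicked the homepage banner.
function trackBannerClick(logger: EventLogger, bannerId: string): void {
  logger.logEvent("banner_click", bannerId, { placement: "homepage" });
}

interface RecordedEvent {
  eventName: string;
  value: string | number | undefined;
  metadata: Record<string, string> | undefined;
}

// Hypothetical logger that records events in memory, for local testing only.
function recordingLogger(): EventLogger & { events: RecordedEvent[] } {
  const events: RecordedEvent[] = [];
  return {
    events,
    logEvent(
      eventName: string,
      value?: string | number,
      metadata?: Record<string, string>
    ): void {
      events.push({ eventName, value, metadata });
    },
  };
}
```

Keeping the logging call behind a small function like `trackBannerClick` makes it easy to swap the in-memory logger for the real client once the SDK is wired in.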