In some cases, users in two experiment groups can have meaningfully different average behaviors before your experiment applies any intervention to them. If this difference persists after the experiment starts, experiment analysis may attribute the pre-existing difference to your intervention, making a result look better or worse than it really is. CUPED helps reduce this bias but can’t fully eliminate it. Additionally, some metrics, such as retention, are not viable candidates for CUPED and can’t easily be adjusted.

Statsig proactively measures the pre-experiment values of all scorecard metrics for every experiment group and determines whether those values differ significantly enough to cause misinterpretation. If bias is detected, users are notified and a warning is placed on the relevant Pulse results.
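One way to picture a significance check on pre-experiment values is a two-sample test on each group's pre-period metric values. The sketch below is illustrative only and is not Statsig's implementation. It assumes one pre-period value per user, uses a large-sample z-test with unequal variances (a normal approximation to Welch's t-test), and treats the function name `pre_experiment_bias` and the default `alpha=0.05` threshold as hypothetical choices.

```python
import math
from statistics import fmean, variance


def pre_experiment_bias(control, test, alpha=0.05):
    """Hypothetical helper: compare two groups' pre-experiment metric values.

    Uses a large-sample z-test with unequal variances (normal approximation
    to Welch's t-test). Returns (delta, z, p_value, flagged).
    """
    delta = fmean(test) - fmean(control)
    # Standard error of the difference in means, allowing unequal variances.
    se = math.sqrt(variance(control) / len(control) + variance(test) / len(test))
    z = delta / se
    # Two-sided p-value under the standard normal: 2 * (1 - Phi(|z|)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return delta, z, p_value, p_value < alpha


# Toy data: the test group averages 1.0 higher before any intervention.
control_pre = [1.0, 2.0, 3.0, 2.0, 2.0] * 200
test_pre = [2.0, 3.0, 4.0, 3.0, 3.0] * 200
delta, z, p_value, flagged = pre_experiment_bias(control_pre, test_pre)
print(f"delta={delta:.2f}, z={z:.1f}, flagged={flagged}")
```

Because this is a normal approximation, it is only reasonable for large groups; with small samples an exact Welch's t-test (for example `scipy.stats.ttest_ind(control, test, equal_var=False)`) would be the safer choice.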

How it works

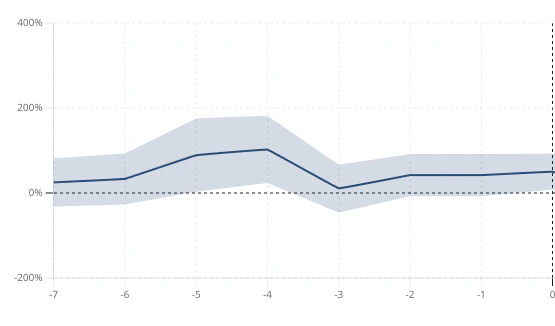

Statsig provides a “Days Since Exposure” view to help identify novelty effects and pre-existing differences between groups. For example, in the experiment shown above, the test group had a consistently higher mean than the control group for this metric in the week before exposure.
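To make the idea of a days-since-exposure view concrete, here is a minimal sketch that buckets a metric by each user's day offset from first exposure, with negative offsets covering the pre-exposure period. The input layout (one `(user_id, date, value)` row per user per day), the `exposures` mapping, and the function name `days_since_exposure_means` are hypothetical and do not describe how Statsig computes this view.

```python
from collections import defaultdict
from datetime import date
from statistics import fmean


def days_since_exposure_means(events, exposures):
    """Hypothetical helper: mean metric value per (group, days since exposure).

    events: iterable of (user_id, event_date, metric_value), one row per user per day
    exposures: dict of user_id -> (group_name, first_exposure_date)
    Negative offsets are days before the user was first exposed.
    """
    buckets = defaultdict(list)
    for user_id, event_date, value in events:
        if user_id not in exposures:
            continue  # ignore users who were never exposed
        group, exposed_on = exposures[user_id]
        offset = (event_date - exposed_on).days
        buckets[(group, offset)].append(value)
    return {key: fmean(values) for key, values in sorted(buckets.items())}


# Toy data: the test user already has a higher value the day before exposure.
exposures = {"u1": ("control", date(2024, 1, 8)), "u2": ("test", date(2024, 1, 8))}
events = [
    ("u1", date(2024, 1, 7), 1.0),
    ("u2", date(2024, 1, 7), 3.0),
    ("u1", date(2024, 1, 8), 2.0),
    ("u2", date(2024, 1, 8), 4.0),
]
result = days_since_exposure_means(events, exposures)
for (group, day), mean in result.items():
    print(group, day, mean)
```

A gap between groups that already appears at negative offsets points to a pre-existing difference rather than an effect of the intervention.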

What to Do

Pre-experiment bias can occur by chance and is not always a major issue.

- If the total delta is small, it may not meaningfully influence your interpretation of results.

- If CUPED can account for the bias, it should not affect your results.