This guide introduces the steps for transitioning from a proof of concept (POC) or limited test on Statsig to a full-scale production deployment.

1. Check Project Name/Structure

- Rename the project if needed: Most teams keep using the project created during the trial, but this is a good time to rename it.

- Decide if you need multiple projects: Tools like tags and granular permissions let you manage multiple teams within a single project. Create a new project only if you're working on a completely separate product where the definition of a user (and DAU) is distinct and no metrics need to be shared across projects. To avoid accidental project creation, you can restrict project creation to Admins.

- Seed your project: Set up tags to use consistently across feature gates, experiments, and metrics.

- Create Segments: Identify internal employees or testers by creating Segments so you can easily override them into feature rollouts and experiments.

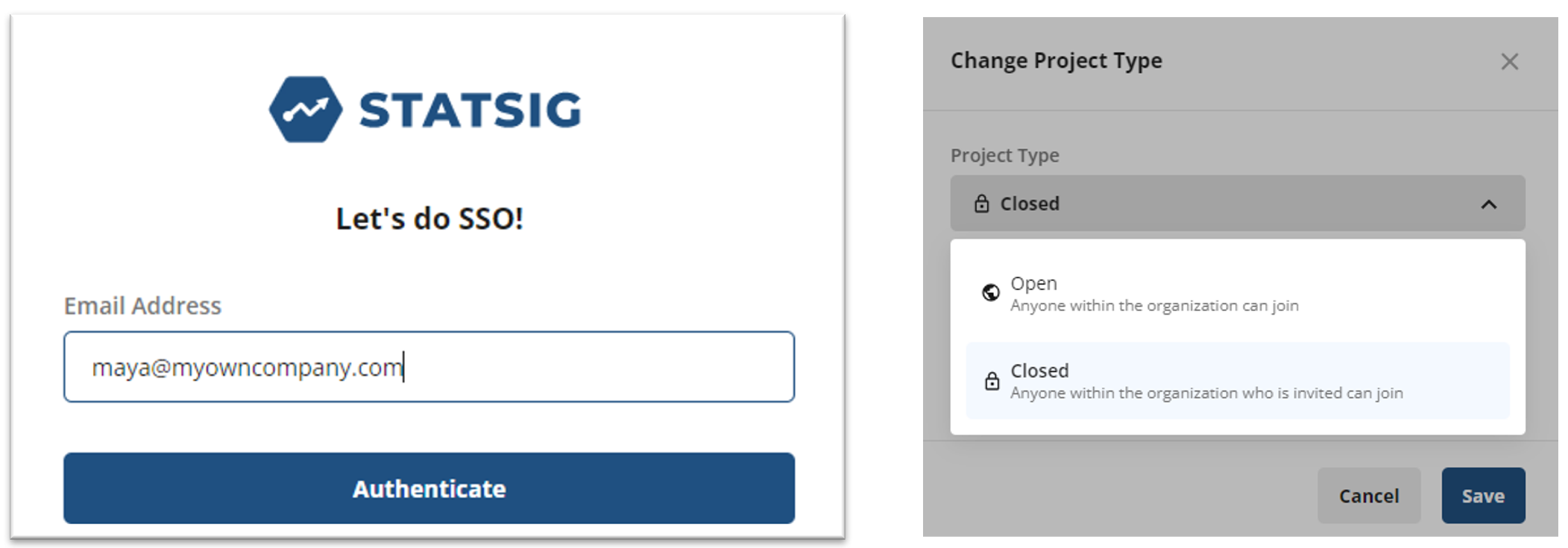
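The Segment override described above can be sketched as a membership check that runs before a gate's normal rollout rules. This is a hypothetical model, not Statsig's SDK or Console API: the `Segment` class, the `evaluate_gate` function, the `example.com` domain, and the `qa-tester-1` ID are illustrative assumptions.

```python
from dataclasses import dataclass, field


# Hypothetical model of a Segment (not Statsig's API): a user belongs to the
# segment if their userID is listed explicitly or their email domain matches.
@dataclass
class Segment:
    name: str
    email_domains: set = field(default_factory=set)
    user_ids: set = field(default_factory=set)

    def contains(self, user: dict) -> bool:
        if user.get("userID") in self.user_ids:
            return True
        email = user.get("email", "")
        if "@" not in email:
            return False
        return email.rsplit("@", 1)[1].lower() in self.email_domains


def evaluate_gate(user: dict, override_segment: Segment, rollout_result: bool) -> bool:
    # Segment members are overridden into the rollout; everyone else gets
    # whatever the gate's normal rules (`rollout_result`) decided.
    if override_segment.contains(user):
        return True
    return rollout_result


internal = Segment(
    "internal_employees",
    email_domains={"example.com"},
    user_ids={"qa-tester-1"},
)

print(evaluate_gate({"userID": "u1", "email": "dev@example.com"}, internal, False))   # True
print(evaluate_gate({"userID": "qa-tester-1"}, internal, False))                       # True
print(evaluate_gate({"userID": "u2", "email": "someone@other.org"}, internal, False))  # False
```

Keeping overrides in one Segment means adding a new tester is a single membership change rather than an edit to every gate and experiment.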

2. Set Up SSO for Easy Onboarding

To streamline user onboarding and deactivation, set up Single Sign-On (SSO) with your identity provider. Statsig supports OpenID Connect (OIDC), a modern protocol compatible with most identity providers. Once SSO is set up, employees verified by your identity provider automatically have Statsig accounts created on their first login. You can also make your project Open so that new users verified by SSO automatically gain access, or keep it Closed and manually approve new access requests.

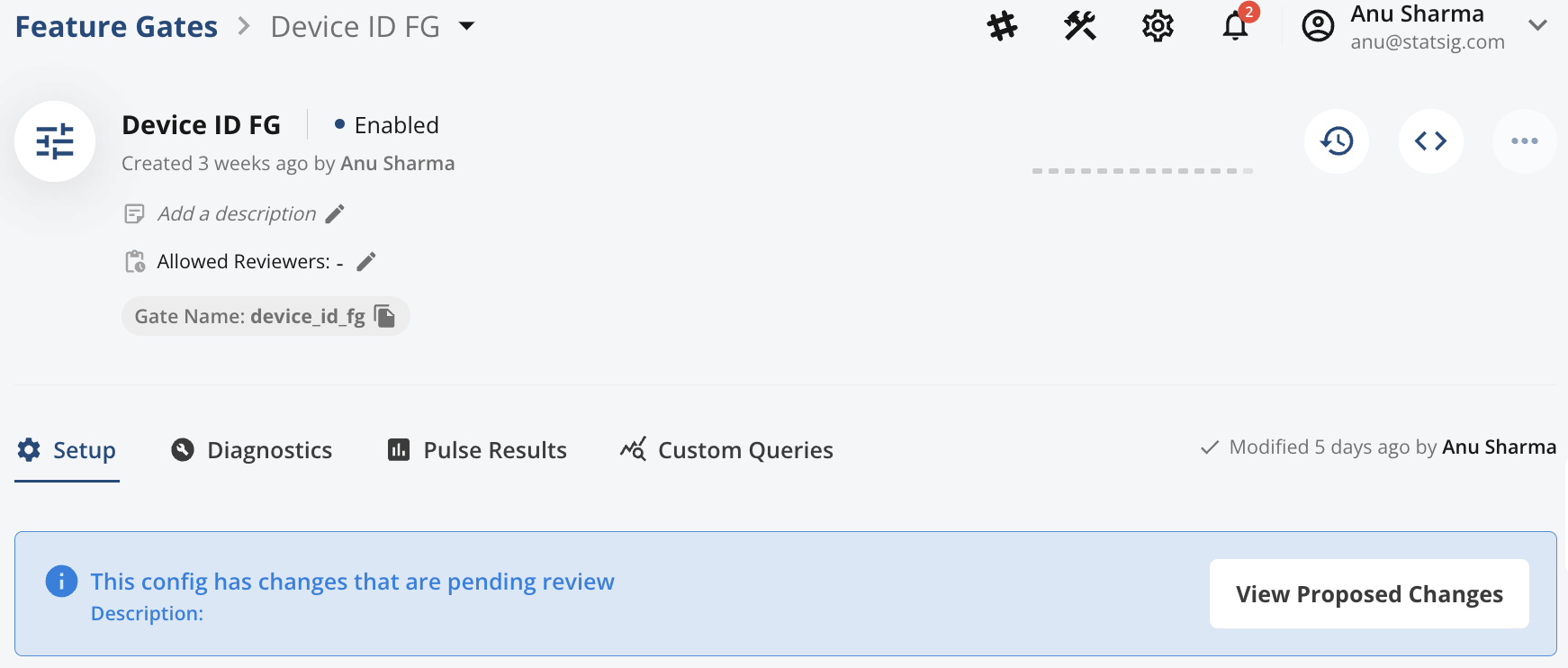
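The Open and Closed settings differ only in what happens after the identity provider has verified a user. The sketch below models that decision for a single project. It is a hypothetical illustration of the behavior described above, not Statsig's implementation, and the `ProjectAccess` enum and `handle_sso_login` function are made-up names.

```python
from enum import Enum


class ProjectAccess(Enum):
    OPEN = "open"
    CLOSED = "closed"


def handle_sso_login(email: str, verified_by_idp: bool, access: ProjectAccess,
                     members: set, pending_requests: set) -> str:
    """Hypothetical model of an SSO login against one project."""
    if not verified_by_idp:
        # The identity provider did not vouch for this user.
        return "denied"
    if email in members:
        return "granted"
    if access is ProjectAccess.OPEN:
        # Open project: any SSO-verified user joins automatically.
        members.add(email)
        return "granted"
    # Closed project: record an access request for an admin to approve.
    pending_requests.add(email)
    return "pending_approval"


members, pending = set(), set()
print(handle_sso_login("ana@example.com", True, ProjectAccess.CLOSED, members, pending))  # pending_approval
print(handle_sso_login("ana@example.com", True, ProjectAccess.OPEN, members, pending))    # granted
```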

3. Define Change Management Policies for Production

To ensure safe production changes, Statsig allows you to require reviews before implementing changes. This is similar to requiring code reviews for software development. You can create Review Groups to notify key stakeholders and set up granular permissions for specific feature gates and experiments. For non-production environments like dev or staging, follow our recommended approach to manage environments effectively.
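Required reviews behave like a merge check: a change to a reviewed environment can be applied only after someone from the relevant Review Group has approved it. The sketch below models that rule under stated assumptions: `ChangeRequest`, `can_apply`, and the environment names are hypothetical, and the rule that authors cannot approve their own changes is borrowed from typical code-review policy rather than taken from Statsig's documentation.

```python
from dataclasses import dataclass, field


@dataclass
class ChangeRequest:
    config_name: str          # e.g. a feature gate or experiment name (hypothetical)
    author: str
    environment: str
    approvals: set = field(default_factory=set)


def can_apply(change: ChangeRequest, review_group: set,
              review_required_envs: frozenset = frozenset({"production"})) -> bool:
    # Environments outside the review policy (e.g. dev, staging) apply immediately.
    if change.environment not in review_required_envs:
        return True
    # Assumed policy: at least one approval from a review-group member other than the author.
    return any(r in review_group and r != change.author for r in change.approvals)


checkout_team = {"priya", "marco"}
change = ChangeRequest("new_checkout_flow", author="priya", environment="production")
print(can_apply(change, checkout_team))  # False: no approvals yet
change.approvals.add("priya")
print(can_apply(change, checkout_team))  # False: self-approval does not count
change.approvals.add("marco")
print(can_apply(change, checkout_team))  # True
```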

4. Set Up Key Metrics and Dimensions

Before starting experiments, ensure that the metrics and dimensions you want to experiment on are correctly set up. Consider the following:

- Custom Metrics: Build custom metrics from existing ones (e.g., aggregations, ratios).

- User Dimensions: Enable slicing results by Geo, User, etc.

- Metric Dimensions: For example, analyzing “add-to-cart” events by Product Category.

- Funnels: Create funnels to track user journeys.

- Tag Metrics: Group frequently used metrics for easy experiment integration.
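To make the metric types above concrete, the sketch computes a ratio metric, a metric broken down by a dimension, and a simple ordered funnel from a raw event log. It is plain Python over a hypothetical event list, not how Statsig computes metrics internally; the event names (`view_item`, `add_to_cart`, `purchase`) and the `category` field are assumptions.

```python
from collections import defaultdict

# Hypothetical event log, assumed to be sorted by time.
events = [
    {"userID": "u1", "event": "view_item", "category": "shoes"},
    {"userID": "u1", "event": "add_to_cart", "category": "shoes"},
    {"userID": "u1", "event": "purchase", "category": "shoes"},
    {"userID": "u2", "event": "view_item", "category": "hats"},
    {"userID": "u2", "event": "add_to_cart", "category": "hats"},
    {"userID": "u3", "event": "view_item", "category": "shoes"},
    {"userID": "u3", "event": "purchase", "category": "shoes"},
]


def ratio_metric(events, numerator, denominator):
    """Custom ratio metric: count of one event divided by count of another."""
    num = sum(1 for e in events if e["event"] == numerator)
    den = sum(1 for e in events if e["event"] == denominator)
    return num / den if den else 0.0


def count_by_dimension(events, event_name, dimension):
    """Metric dimension: break one event's count down by a property."""
    counts = defaultdict(int)
    for e in events:
        if e["event"] == event_name:
            counts[e.get(dimension, "unknown")] += 1
    return dict(counts)


def funnel(events, steps):
    """Users reaching each step in order; a user counts at step k only after reaching step k-1."""
    progress = {}                      # userID -> index of the next step they need
    reached = [0] * len(steps)
    for e in events:
        k = progress.get(e["userID"], 0)
        if k < len(steps) and e["event"] == steps[k]:
            reached[k] += 1
            progress[e["userID"]] = k + 1
    return reached


print(ratio_metric(events, "add_to_cart", "view_item"))           # 2 add-to-carts / 3 views
print(count_by_dimension(events, "add_to_cart", "category"))      # {'shoes': 1, 'hats': 1}
print(funnel(events, ["view_item", "add_to_cart", "purchase"]))   # [3, 2, 1]
```

Note that `u3` purchased without adding to cart, so the ordered funnel does not credit that purchase; this is the usual reason to define funnels explicitly rather than reading step counts off raw event totals.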

5. Determine IDs for Experimentation

Most Statsig experiments and feature rollouts target users by userID, but Statsig also allows experimentation using other "units of assignment." Here are your options:

- userID: Identifies individual users.

- stableID: A Statsig SDK-managed ID, typically used to experiment on anonymous users (e.g., unique to devices).

- Custom IDs: You can create arbitrary IDs based on your use case, such as:

  - organizationID for B2B customers

  - vehicleID for controlling features in vehicles

  - creatorID for two-sided marketplaces, controlling features for creators vs. consumers
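The choice of unit matters because assignment is deterministic per ID: when an experiment is keyed on `organizationID`, every user in the same organization lands in the same group. The sketch below illustrates this with a salted SHA-256 hash. It is a generic bucketing technique, not Statsig's actual assignment algorithm, and the user shape (`userID` plus a `customIDs` map holding `stableID` and `organizationID`) and the `pricing_test` salt are assumptions for illustration.

```python
import hashlib
from typing import Optional


def unit_id_for(user: dict, id_type: str) -> Optional[str]:
    # Assumed shape: userID is top-level; stableID and custom IDs live in customIDs.
    if id_type == "userID":
        return user.get("userID")
    return user.get("customIDs", {}).get(id_type)


def assign_group(user: dict, id_type: str, experiment_salt: str, groups: list) -> Optional[str]:
    unit_id = unit_id_for(user, id_type)
    if unit_id is None:
        return None  # user lacks this unit type, so is not in the experiment
    digest = hashlib.sha256(f"{experiment_salt}.{unit_id}".encode()).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # 0..9999
    return groups[bucket * len(groups) // 10_000]        # equal-sized groups


alice = {"userID": "alice", "customIDs": {"stableID": "device-1", "organizationID": "acme"}}
bob = {"userID": "bob", "customIDs": {"stableID": "device-2", "organizationID": "acme"}}
anon = {"customIDs": {"stableID": "device-3"}}

groups = ["control", "test"]
print(assign_group(alice, "organizationID", "pricing_test", groups) ==
      assign_group(bob, "organizationID", "pricing_test", groups))  # True: same org, same group
print(assign_group(anon, "organizationID", "pricing_test", groups))  # None
print(assign_group(anon, "stableID", "pricing_test", groups) in groups)  # True
```

Keying on `userID` instead would let Alice and Bob land in different groups, which is usually undesirable for B2B features that colleagues see together.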

6. Set Up Integrations

If you haven't already, explore the integrations available to bring data into Statsig. Additionally, consider setting up these integrations:

- Data Warehouse Export: Export assignment data from Statsig to your data warehouse.

- Change History Exports: Export change history to Slack, Teams, or Datadog to overlay with operational dashboards.

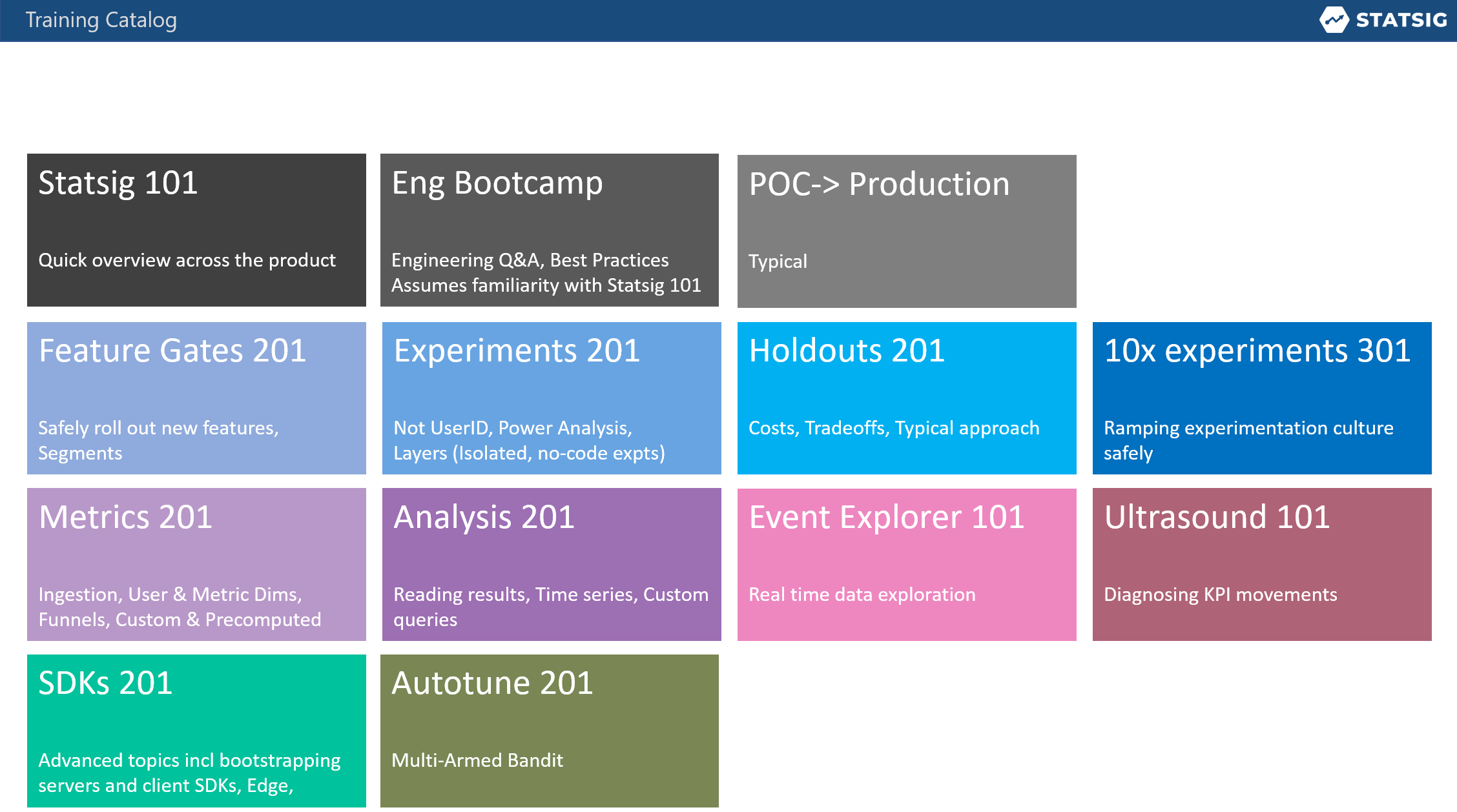
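Once assignment data lands in your warehouse, a common first step is joining it to your own event tables. The sketch shows that join in plain Python. The row shapes (`unit_id`, `group`, `event`) are hypothetical stand-ins, not the schema of Statsig's export, so map them to the actual columns in your warehouse; it also assumes one assignment row per unit.

```python
from collections import defaultdict


def conversion_by_group(assignments, events, metric_event):
    """Share of assigned units in each group that logged `metric_event` at least once."""
    group_of = {row["unit_id"]: row["group"] for row in assignments}
    assigned = defaultdict(int)
    for group in group_of.values():
        assigned[group] += 1
    # Units outside the experiment are ignored, even if they converted.
    converters = {e["unit_id"] for e in events
                  if e["event"] == metric_event and e["unit_id"] in group_of}
    converted = defaultdict(int)
    for unit in converters:
        converted[group_of[unit]] += 1
    return {g: converted[g] / n for g, n in assigned.items()}


assignments = [
    {"unit_id": "u1", "group": "control"},
    {"unit_id": "u2", "group": "control"},
    {"unit_id": "u3", "group": "test"},
    {"unit_id": "u4", "group": "test"},
]
events = [
    {"unit_id": "u1", "event": "purchase"},
    {"unit_id": "u3", "event": "purchase"},
    {"unit_id": "u4", "event": "purchase"},
    {"unit_id": "u9", "event": "purchase"},  # never assigned, so excluded
]
print(conversion_by_group(assignments, events, "purchase"))  # {'control': 0.5, 'test': 1.0}
```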

7. Best Practices, Forums, and Training

Create a repository for best practices and a forum for experimenters to share insights, get feedback, and seek help. Provide guidance to your teams on when to use Feature Gates versus Experiments. If you're on Statsig's Enterprise Support, consider organizing specific training for subject matter experts (SMEs):

- Start with train-the-trainer sessions, allowing SMEs to drill into key topics.

- Use these sessions to create tailored guidelines for your teams.

- Switch to using the Slack Support channel with an SLA for faster assistance.