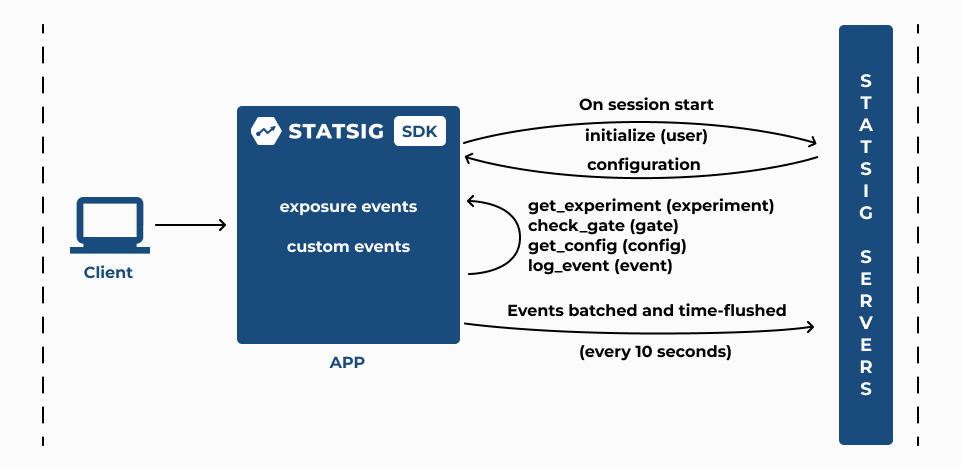

- Initialization

- Checking an Experiment

- Logging Custom Events

1. Initialization

- The client SDK’s `initialize` call takes the client SDK key and a StatsigUser object. It first checks local storage for values cached by a previous `initialize` call, then makes a network request to Statsig servers. This request fetches the precomputed configuration parameters for the specified user and stores them in local storage on the client device. If the request fails, the SDK uses the previously cached values.

- Statsig’s server latency to service `initialize` calls is generally 10ms (p50). The latency for a given client may vary depending on how far the device is from Statsig’s servers. The client SDK has a built-in timeout of 3 seconds, which you can configure using StatsigOptions when you initialize the SDK.

- The StatsigUser object that you provide in the `initialize` call should include the user identifier, userID, that you use to identify the end-user of your application. The client SDK also generates a device identifier called stableID to enable experiments where users aren’t signed in and a userID is not available. You can override this stableID through StatsigOptions using the overrideStableID parameter when you initialize the SDK.
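The cache-then-network behavior described above can be modeled in a few lines. This is a minimal sketch, not the SDK's implementation: `resolveInitializeValues`, `FetchResult`, and the in-memory `cache` map (a stand-in for local storage) are hypothetical names introduced here for illustration, and the network outcome is passed in as data rather than fetched.

```typescript
// Hypothetical model of the initialize flow; none of these names come from the Statsig SDK.
type Values = Record<string, unknown>;

type FetchResult =
  | { ok: true; values: Values }
  | { ok: false; reason: "timeout" | "network_error" };

// In-memory stand-in for the client device's local storage.
const cache = new Map<string, Values>();

function resolveInitializeValues(
  cacheKey: string,
  fetchResult: FetchResult,
): { values: Values; source: "network" | "cache" | "none" } {
  // Step 1: look up values stored by a previous initialize.
  const cached = cache.get(cacheKey);

  // Step 2: a successful request replaces the cached values.
  if (fetchResult.ok) {
    cache.set(cacheKey, fetchResult.values);
    return { values: fetchResult.values, source: "network" };
  }

  // Step 3: on failure or timeout, fall back to the cached values if any exist.
  if (cached !== undefined) {
    return { values: cached, source: "cache" };
  }
  return { values: {}, source: "none" };
}

// First launch succeeds; a later launch times out and reuses the cache.
const first = resolveInitializeValues("user-123", {
  ok: true,
  values: { button_color: "blue" },
});
const second = resolveInitializeValues("user-123", { ok: false, reason: "timeout" });
```

In this model, `second` carries the values stored by the first call, which mirrors the fallback described in the first bullet.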

2. Checking an Experiment

- After the `initialize` call completes, any subsequent `getExperiment` call synchronously returns the variant that your end-user is assigned to (you define these parameters when you set up an experiment on the Statsig console). Use the returned `config` object to `get` the value of the parameters required to implement the experiment variant.

- If your experiment is part of a Layer, the `getLayer` call returns the variant that your end-user is assigned to. Use the returned `layer` object to `get` the value of the parameters required to implement the experiment variant.

- The `checkGate` call returns true if the end-user passes the feature gate rules that you’ve configured on the Statsig console, and false otherwise.

- The `getConfig` call returns the dynamic configuration based on the rules that you’ve configured to target your end-users. Use the returned `config` object to `get` the value of the parameters required to serve a dynamically configured app experience.

- All of the above (`checkGate`, `getExperiment`, `getLayer`, and `getConfig`) log an exposure at the time the check is made, before returning a value to the caller.

- The client SDK automatically flushes all accumulated exposure checks and events to the Statsig servers every 10 seconds.

- You can also force this flush by calling `shutdown` to push these accumulated exposure checks to Statsig servers. The `shutdown` call resets the state of the SDK, and you should call it when the application is about to exit.

- You can verify that exposure events are being recorded in Statsig servers by checking the live Exposure Stream in the Statsig console under the Diagnostics tab of your experiment.

- The Users and Checks charts in the Diagnostics tab are updated hourly; the Metrics Lift panel in experiment Results is updated daily around 9am PST.
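To make the "exposure is logged before the value is returned" ordering concrete, here is a small self-contained model. It is a sketch under stated assumptions: `ClientSketch`, `ConfigSketch`, and `pendingExposures` are hypothetical names rather than SDK classes, and the `get(key, fallback)` shape simply illustrates reading a parameter with a default value.

```typescript
// Hypothetical model; these classes are illustrations, not the Statsig SDK.
type Exposure = { kind: "gate" | "experiment"; name: string };

class ConfigSketch {
  private readonly params: Record<string, unknown>;

  constructor(params: Record<string, unknown>) {
    this.params = params;
  }

  // Return the configured parameter, or the caller's fallback if it is missing.
  get<T>(key: string, fallback: T): T {
    const value = this.params[key];
    return value === undefined ? fallback : (value as T);
  }
}

class ClientSketch {
  readonly pendingExposures: Exposure[] = [];
  private readonly gates: Record<string, boolean>;
  private readonly experiments: Record<string, Record<string, unknown>>;

  constructor(
    gates: Record<string, boolean>,
    experiments: Record<string, Record<string, unknown>>,
  ) {
    this.gates = gates;
    this.experiments = experiments;
  }

  checkGate(name: string): boolean {
    // The exposure is queued first, then the value is returned.
    this.pendingExposures.push({ kind: "gate", name });
    return this.gates[name] ?? false; // unconfigured gates evaluate to false
  }

  getExperiment(name: string): ConfigSketch {
    this.pendingExposures.push({ kind: "experiment", name });
    return new ConfigSketch(this.experiments[name] ?? {});
  }
}

const client = new ClientSketch(
  { new_checkout: true },
  { button_test: { color: "green" } },
);
const color = client.getExperiment("button_test").get("color", "blue");
const size = client.getExperiment("button_test").get("size", 12);
const missingGate = client.checkGate("not_configured");
```

Each of the three checks adds one entry to `pendingExposures`, which is the queue a periodic flush would later send to the server.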

Updating Experiment Configuration

- The experiment configuration received from Statsig servers in response to the `initialize` call persists until you either make another `initialize` call (recommended when the end-user starts a new session) or an `updateUser` call (recommended when an end-user attribute changes). These calls trigger a re-evaluation of all experiment configuration for the end-user.

Using StatsigUser: Learn how to use StatsigUser while using a client SDK.

Setting Default Parameter Values

Statsig recommends including a default value when you make the `get` call to fetch a parameter value from the returned config object. This ensures that your application code falls back to the default value for a parameter that has not yet been configured in an experiment, layer, or dynamic config in the Statsig console. For convenience, Statsig by default returns false when you check a gate that has not yet been configured.

You can test your experiment in development or staging environments by setting the environment tier in the `initialize` call. When you do this, you can verify that your exposure checks are working by switching the toggle to Show non-production logs in the Exposure Stream under the Diagnostics tab for your experiment in the Statsig console. By default, the Exposure Stream shows exposure checks logged in production environments.

When you’re testing in development or staging environments, you can target specific members of your team to see a specific variant by adding these members to the override list of a rule or variant group using the Manage Overrides option.

A word on cached values

If the `initialize` request fails, the SDK falls back to the values cached for the user. The choice of cache key for those values matters: session-related values can change on every `initialize` call, so if the full user object were used as the cache key, a lookup might never match the cached values.

We define the “user” to cache based on the set of all IDs: the userID and all customIDs. This means that if another field changes in the user object, we will still load the cached values as long as the IDs are the same.

If you have critical fields that define a user uniquely and are not in the userID or customIDs fields, you can override the cache key. Check the documentation for your specific SDK. At the moment, this is only available in JavaScript-based SDKs (JS, React, React Native, Expo) and the Android and iOS native SDKs.
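As an illustration of keying the cache on IDs only, the sketch below derives a key from the userID and customIDs and ignores every other field. The key format, the `UserSketch` interface, and the `cacheKeyFor` function are assumptions made for this example; the SDK's actual key format is not specified here and may differ.

```typescript
// Hypothetical user shape and key format; the SDK's real cache key may differ.
interface UserSketch {
  userID?: string;
  customIDs?: Record<string, string>;
  locale?: string; // example of a non-ID field that can change between sessions
}

function cacheKeyFor(user: UserSketch): string {
  const customIDs = user.customIDs ?? {};
  // Sort the custom ID names so property order does not change the key.
  const parts = Object.keys(customIDs)
    .sort()
    .map((name) => `${name}:${customIDs[name]}`);
  return [`userID:${user.userID ?? ""}`, ...parts].join("|");
}

const sessionA: UserSketch = { userID: "u1", customIDs: { orgID: "o9" }, locale: "en-US" };
const sessionB: UserSketch = { userID: "u1", customIDs: { orgID: "o9" }, locale: "fr-FR" };
const otherUser: UserSketch = { userID: "u2", customIDs: { orgID: "o9" } };

const keyA = cacheKeyFor(sessionA);
const keyB = cacheKeyFor(sessionB);
const keyOther = cacheKeyFor(otherUser);
```

Here `keyA` and `keyB` are equal even though `locale` differs, so a failed `initialize` in the second session could still find the first session's cached values, while `keyOther` is distinct.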

3. Logging Custom Events

- The client SDK’s `logEvent` call takes a custom event that you want to record to analyze the impact of the experiment on your end-user experience.

- The client SDK automatically flushes all accumulated logged custom events to the Statsig servers every 10 seconds.

- Statsig uses these custom events to compute metrics as part of your experiment Results; Statsig automatically updates the Metrics Lift panel in the experiment Results tab daily around 9am PST.
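The batching behavior can be pictured as a queue that is drained on an interval. The sketch below is a hypothetical model, not SDK code: `EventQueueSketch`, `tick`, and `sentBatches` are invented for illustration, and the clock is passed in explicitly so the example runs without real timers or network calls.

```typescript
// Hypothetical model of event batching; not the Statsig SDK's implementation.
type EventSketch = { name: string; value: string | number | null; timeMs: number };

class EventQueueSketch {
  readonly sentBatches: EventSketch[][] = [];
  private pending: EventSketch[] = [];
  private lastFlushMs = 0;
  private readonly flushIntervalMs: number;

  // The 10000 ms default mirrors the 10-second interval described above.
  constructor(flushIntervalMs: number = 10000) {
    this.flushIntervalMs = flushIntervalMs;
  }

  logEvent(name: string, nowMs: number, value: string | number | null = null): void {
    // Events accumulate locally; nothing is sent at log time.
    this.pending.push({ name, value, timeMs: nowMs });
  }

  // A real client would drive this from a timer; here the caller supplies the clock.
  tick(nowMs: number): void {
    if (nowMs - this.lastFlushMs >= this.flushIntervalMs) {
      this.flush(nowMs);
    }
  }

  // Send everything accumulated so far as one batch (also usable as a forced flush).
  flush(nowMs: number): void {
    if (this.pending.length > 0) {
      this.sentBatches.push(this.pending);
      this.pending = [];
    }
    this.lastFlushMs = nowMs;
  }
}

const queue = new EventQueueSketch();
queue.logEvent("add_to_cart", 1000, 29.99);
queue.logEvent("purchase", 4000);
queue.tick(5000); // interval not yet elapsed, nothing is sent
const batchesBeforeInterval = queue.sentBatches.length;
queue.tick(10000); // interval elapsed, both events go out in one batch
```

Batching in this way trades a short delay for fewer network requests; a forced flush at application exit covers events logged after the last interval.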

Custom event logging can sometimes be blocked by third-party plugins. As a workaround, you can set up a custom proxy using your domain for log event API calls. For more information, refer to Custom Proxy for Statsig API.