Email campaigns are a critical tool for any marketing team. Finding the best-performing email template is a perfect use case for an A/B test. Statsig lets you run simple but powerful A/B tests on different parts of your email content. Because Statsig integrates with product analytics, you can run email experiments and easily understand their deeper business-level impact.

This guide assumes you have an existing Statsig account. If you don’t already have one, create a free account at https://statsig.com/signup

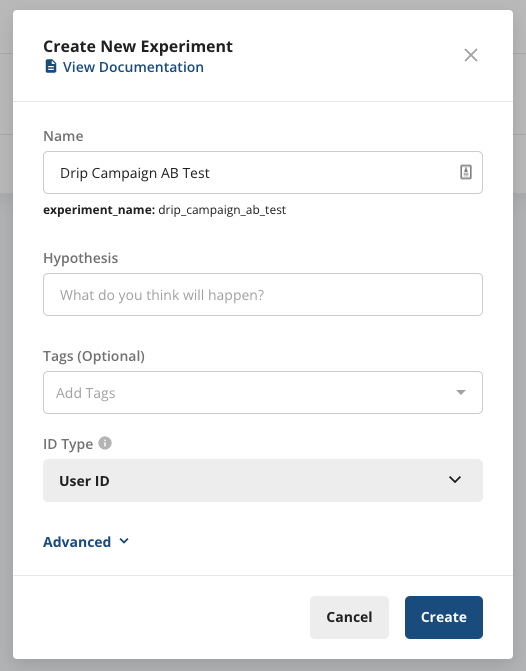

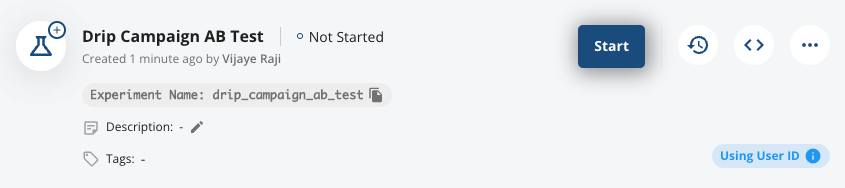

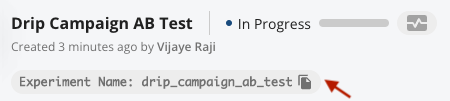

Step 1: Create an experiment

Start by creating a new experiment in the Statsig console. Enter a name and leave the remaining fields empty or at their defaults, which is sufficient for this walkthrough.

Step 2: Start the experiment

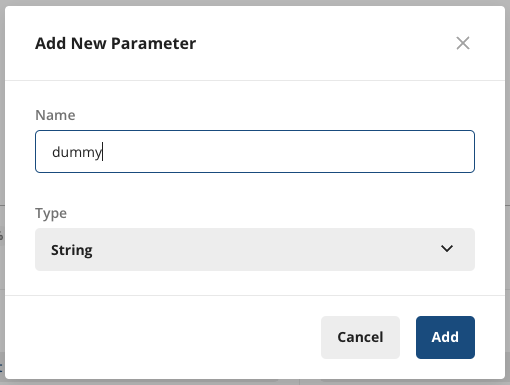

An experiment can’t be started without a parameter, so add a dummy parameter first and then start the experiment.

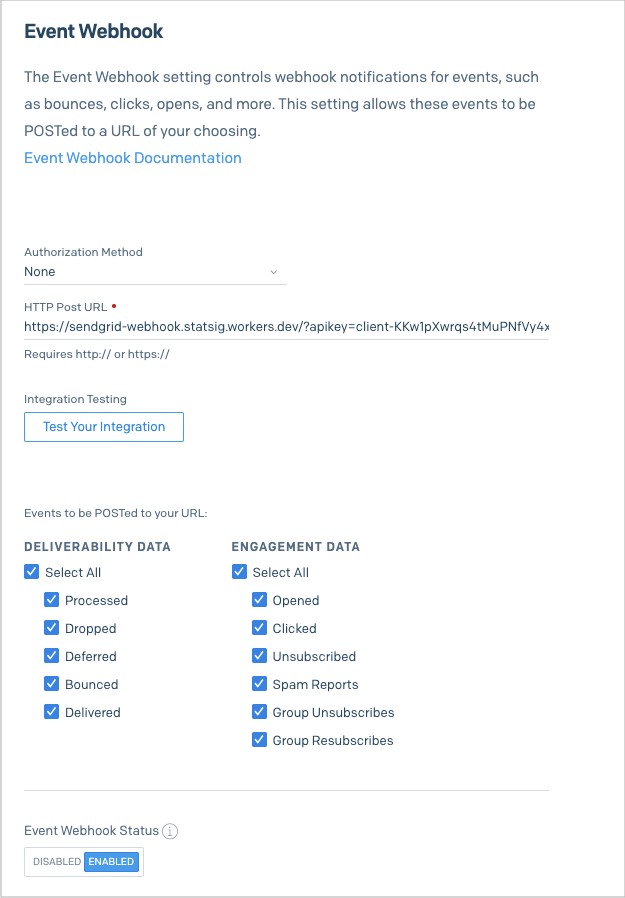

Step 3: Set up Webhook

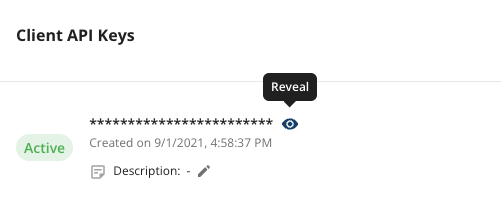

In your SendGrid console, go to Settings -> Mail Settings -> Event Webhook. In the HTTP Post URL field, enter: https://sendgrid-webhook.statsig.workers.dev/?apikey=[YOUR STATSIG API KEY]

You can find your API key in the Statsig console by navigating to Project Settings -> API Keys and copying the ‘Client API Key’. It looks like this:

client-abcd123efg...
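
If you prefer to assemble the webhook URL in a script rather than by hand, the following is a minimal sketch. The helper name `build_webhook_url` is hypothetical, and the check for a `client-` prefix is an assumption based only on the example key shown above.

```python
from urllib.parse import urlencode

# Webhook endpoint given in this guide.
WEBHOOK_BASE = "https://sendgrid-webhook.statsig.workers.dev/"


def build_webhook_url(client_api_key: str) -> str:
    """Return the URL to paste into SendGrid's HTTP Post URL field.

    Hypothetical helper; the "client-" prefix check is an assumption
    drawn from the example key format in this guide.
    """
    if not client_api_key.startswith("client-"):
        raise ValueError("expected a Client API Key starting with 'client-'")
    # urlencode escapes any characters that are not safe in a query string.
    return f"{WEBHOOK_BASE}?{urlencode({'apikey': client_api_key})}"


# Placeholder key for illustration only; substitute your own Client API Key.
print(build_webhook_url("client-EXAMPLE"))
```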

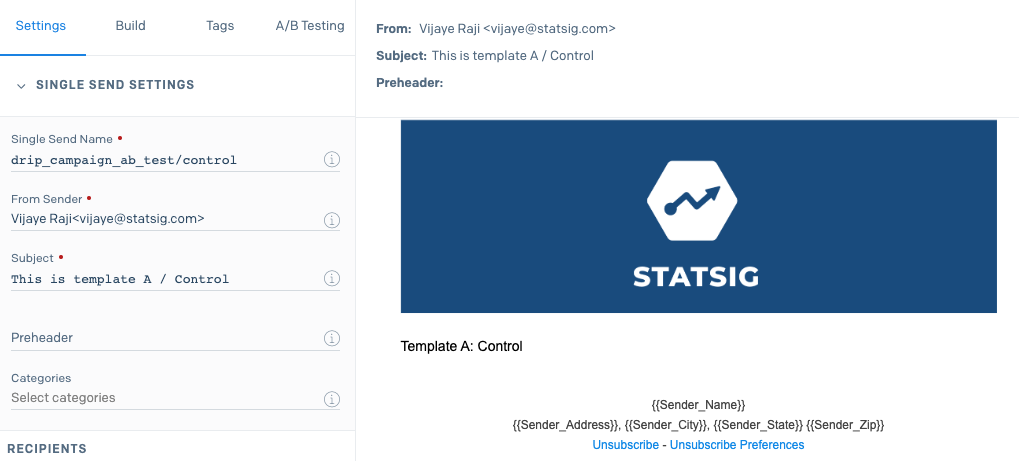

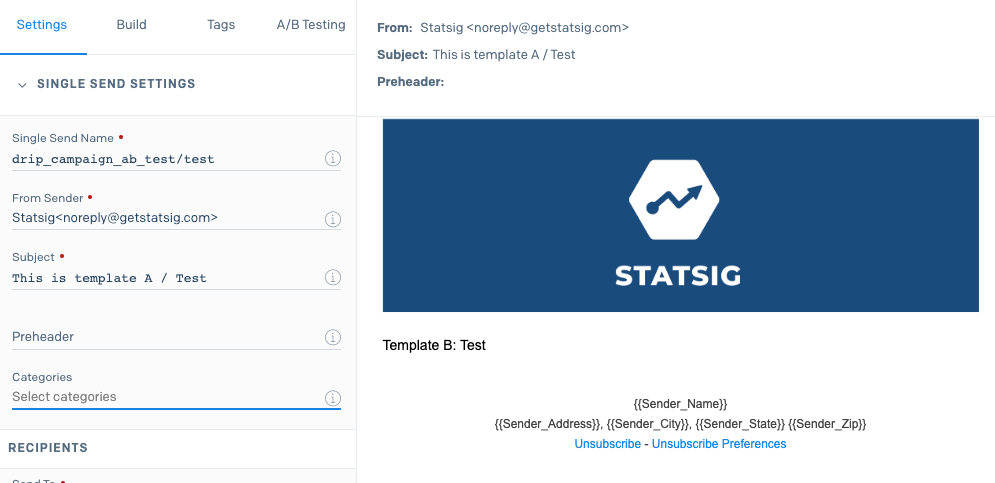

Step 4: Create Single Sends

Now, in SendGrid, create two new Single Sends and name them after the experiment. The first one is the “Control”, which is the baseline. It should be named [experiment_name]/control. For example, in our case it will be drip_campaign_ab_test/control.

The second one is the test variant. It should be named [experiment_name]/test. For example, in our case it will be drip_campaign_ab_test/test.

To avoid introducing bias, split the recipient list at random. For instance, recipients within the same company should be distributed evenly between the two lists.
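
One way to produce such a split outside SendGrid is sketched below. It is an illustration under stated assumptions, not a Statsig or SendGrid feature: the function name `split_recipients` is hypothetical, and a recipient's "company" is approximated by the domain of the email address.

```python
import random
from collections import defaultdict


def split_recipients(emails, variants=("control", "test"), seed=None):
    """Randomly split emails across variants, balancing each email domain.

    Assumption: the email domain stands in for the recipient's company.
    """
    rng = random.Random(seed)

    # Group recipients by domain so each company can be spread evenly.
    by_domain = defaultdict(list)
    for email in emails:
        domain = email.rsplit("@", 1)[-1].lower()
        by_domain[domain].append(email)

    # Randomize the order of domains and of recipients within each domain.
    domains = list(by_domain)
    rng.shuffle(domains)

    groups = {name: [] for name in variants}
    position = 0  # running index, so leftovers rotate across variants
    for domain in domains:
        members = by_domain[domain]
        rng.shuffle(members)
        for email in members:
            groups[variants[position % len(variants)]].append(email)
            position += 1
    return groups


# Made-up addresses for illustration.
example_recipients = [
    "ana@acme.com", "bo@acme.com", "cy@acme.com", "di@acme.com",
    "ed@globex.com", "fay@globex.com", "gus@globex.com",
    "hal@initech.com",
]
example_groups = split_recipients(example_recipients, seed=7)
print({name: len(members) for name, members in example_groups.items()})
```

Each resulting list can then be uploaded as the recipient list for the matching Single Send. Dealing recipients out in round-robin order after shuffling keeps both the overall list sizes and the per-domain counts within one of each other.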

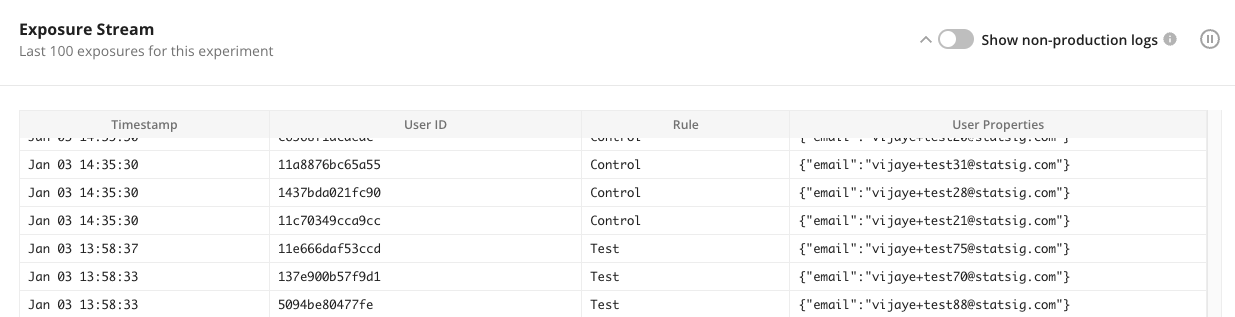

Monitoring the setup

Once the sends have started, you can verify that everything is working as expected by navigating to the Diagnostics tab in your experiment and looking at the ‘Exposure Stream’ at the bottom of the page. This shows a real-time stream of exposure events along with the variant each one was allocated.

Interpreting results

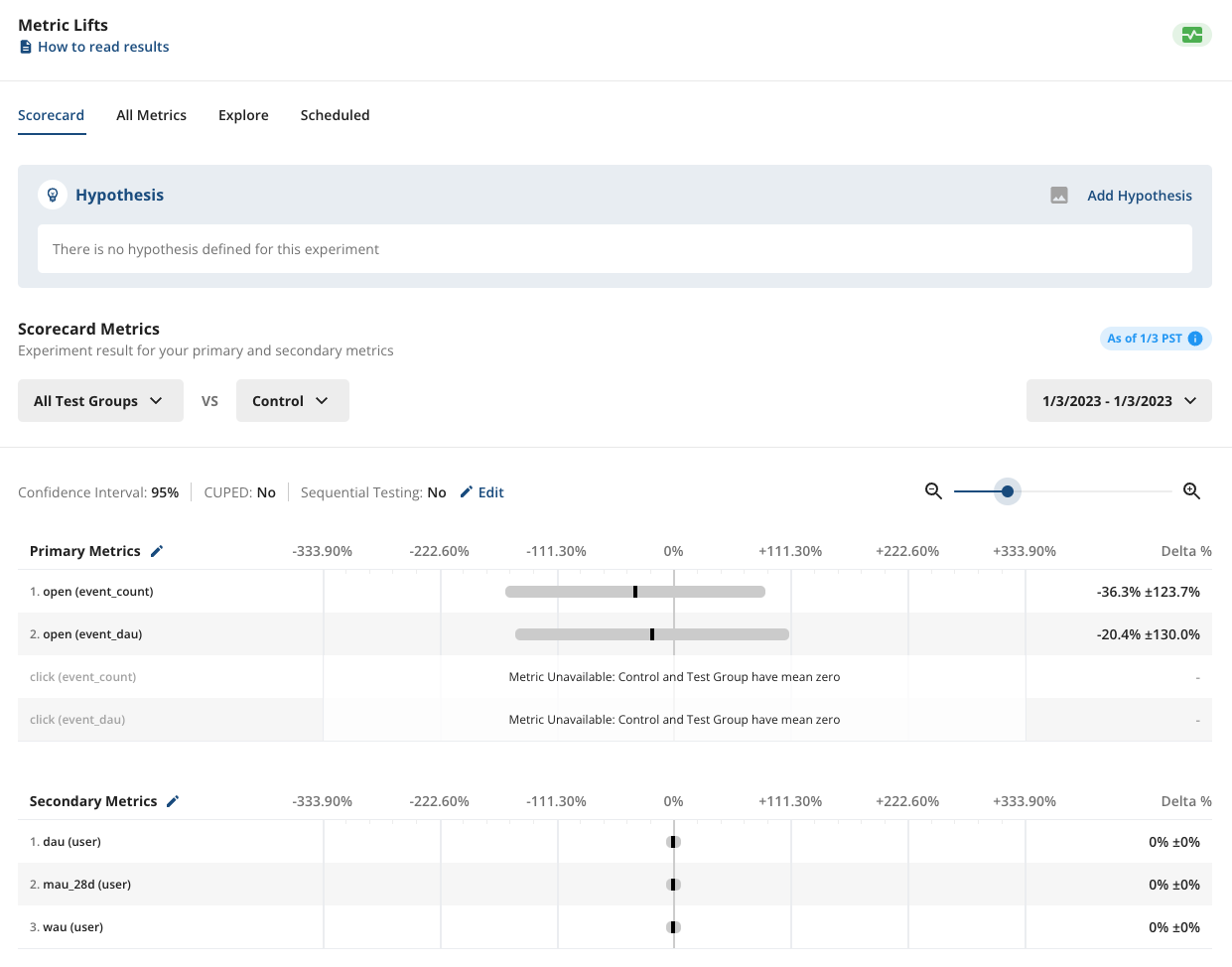

In the Pulse Results tab on the experiment page, you can add the metrics you want to monitor and see which variant is performing better. To learn more about reading Pulse Results, see the article Reading Pulse Results.

Using API instead of Single Send

Statsig also supports A/B testing when you use the API or Automation to send marketing emails. To enable this, use unique arguments (https://docs.sendgrid.com/for-developers/sending-email/unique-arguments) and pass in unique_args that carry the experiment and variant name.

More than two variants

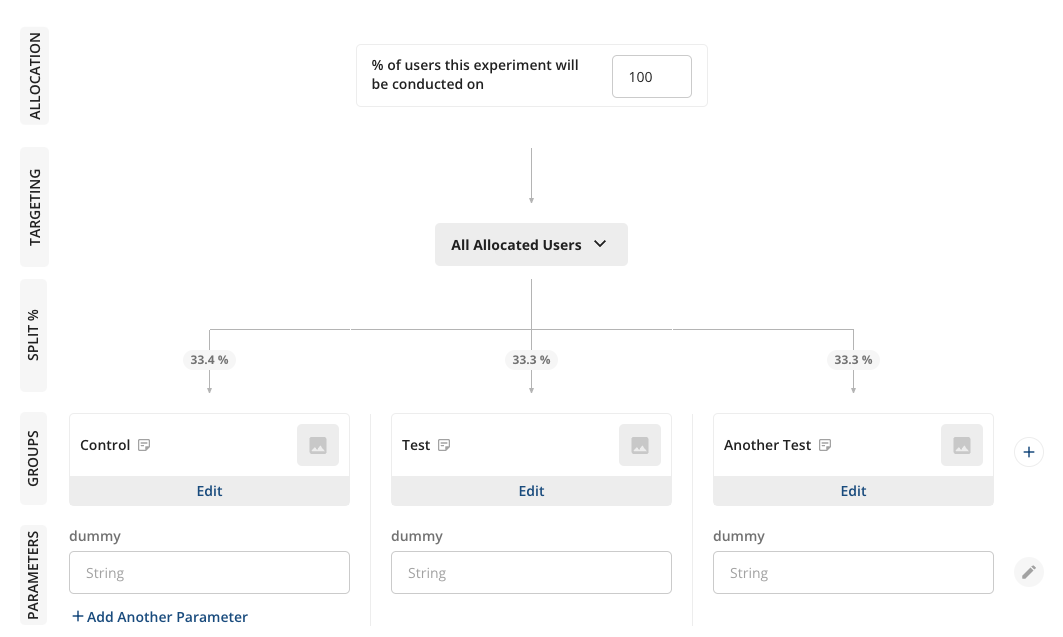

It’s simple to extend this setup to run A/B/C or A/B/n tests. Add more variants in the Experiment Setup tab, and make sure each variant name is applied correctly in either the Single Send name or the unique arguments passed through the API.
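
To keep names consistent as the number of variants grows, the Single Send names can be generated rather than typed. This is a minimal sketch following the [experiment_name]/[variant] convention used in this guide; the helper `single_send_names` and the variant names `test_1` and `test_2` are hypothetical, and rejecting "/" inside a name is an assumption that the slash acts purely as a separator.

```python
from typing import List


def single_send_names(experiment_name: str, variants: List[str]) -> List[str]:
    """Build "[experiment_name]/[variant]" names, one per variant.

    Hypothetical helper. Assumption: "/" is only a separator, so it is
    rejected inside the experiment name and the variant names.
    """
    if not experiment_name or "/" in experiment_name:
        raise ValueError("experiment name must be non-empty and contain no '/'")
    if len(set(variants)) != len(variants):
        raise ValueError("variant names must be unique")
    names = []
    for variant in variants:
        if not variant or "/" in variant:
            raise ValueError(f"invalid variant name: {variant!r}")
        names.append(f"{experiment_name}/{variant}")
    return names


# Variant names must match those configured in the Experiment Setup tab;
# "test_1" and "test_2" are placeholders.
for name in single_send_names("drip_campaign_ab_test", ["control", "test_1", "test_2"]):
    print(name)
```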