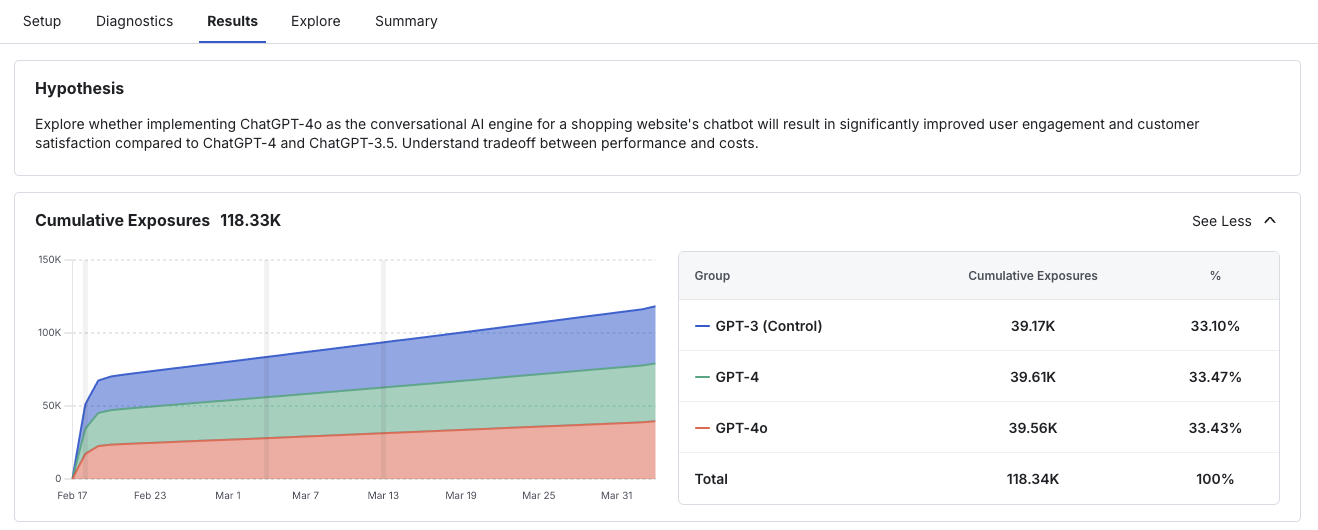

Read Experiment Results

To read the results of your experiment, go to the Results tab, where you will see your experiment hypothesis, exposures, and Scorecard.

Exposures

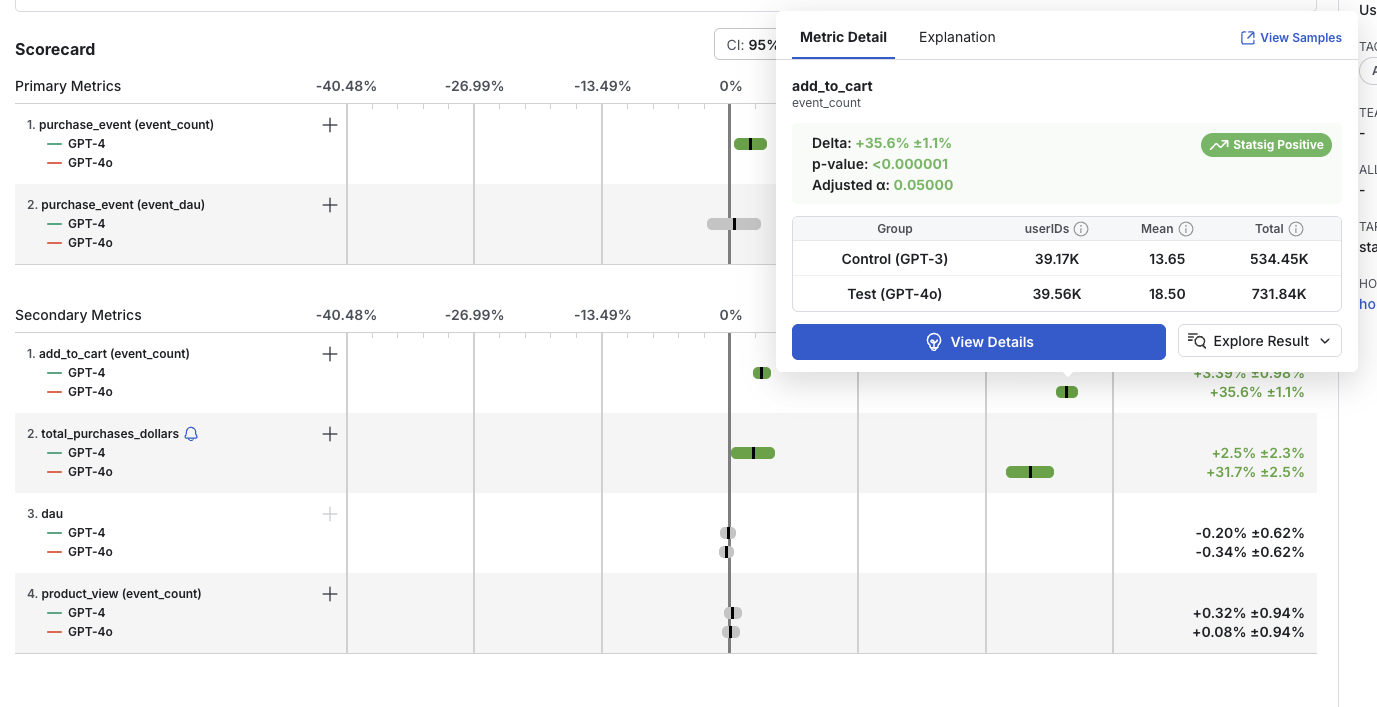

Scorecard

The experiment Scorecard shows the metric lifts for all Primary and Secondary metrics you set up at experiment creation.

Immediately Post-experiment Start

For up to the first 24 hours after starting your experiment (before our daily metric results run), the Scorecard section is calculated hourly. This only applies to Statsig Cloud; for WHN projects, you will need to reload results on demand or set up a daily schedule. This more real-time Scorecard is designed to let you confirm that exposures and metrics are being calculated as expected, and to debug your experiment or gate setup if needed.

You should not make any experiment decisions based on real-time results data in this first 24-hour window after experiment start. Experiments should only be called once the experiment has hit its target duration, as set by your primary metric(s) hitting experimental power.

Read more about target duration here.

During this first 24-hour window, the Scorecard has the following limitations:

- Metric lifts do not have confidence intervals

- No time-series view of metric trends

- No projected topline impact analysis

- No option to apply more advanced statistical tactics, such as CUPED or Sequential Testing

Post-first Day Scorecard

After the first day, the Scorecard shows the following for each metric:

- The calculated relative difference (Delta %)

- The confidence interval

- Whether the result is statistically significant

- Positive lifts are green

- Negative lifts are red

- Non-significant results are grey

- Experiment results are computed for the first 90 days: By default, Statsig computes results for only the first 90 days of your experiment. You will be notified by email as you approach the 90-day cap, at which point you will be able to extend the compute window by 30 days at a time. If the experiment runs beyond the compute window, new users will no longer be added to the experiment’s results. Analysis for users who have already been exposed will continue to run, even if the compute window is not extended, until you make a decision on the experiment.

This experiment result calculation window only affects whether a user is included in the experiment’s analysis, and does not affect the treatment each user would receive. New users would still receive the experience for the group they get randomized into.
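The compute-window rule above can be sketched as a simple date check. This is a minimal illustration, not Statsig's implementation: the function name `is_added_to_results`, its parameters, and the exact boundary handling (whether the last day counts as inside the window) are assumptions.

```python
from datetime import date, timedelta

def is_added_to_results(first_exposure, experiment_start,
                        extensions=0, base_days=90, extension_days=30):
    """Return True if a user first exposed on `first_exposure` would be
    added to the analysis, under the assumed window rule.

    Hypothetical helper: the window is taken to be `base_days` long,
    plus `extension_days` for each extension that was granted.
    """
    window_days = base_days + extensions * extension_days
    window_end = experiment_start + timedelta(days=window_days)
    # Assumption: the window end is exclusive.
    return experiment_start <= first_exposure < window_end

start = date(2024, 1, 1)
late_user = start + timedelta(days=100)

print(is_added_to_results(late_user, start))                # outside the default window
print(is_added_to_results(late_user, start, extensions=1))  # inside after one extension
```

Note that the check only decides whether a user enters the analysis. It says nothing about which group the user is assigned to, which matches the paragraph above.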

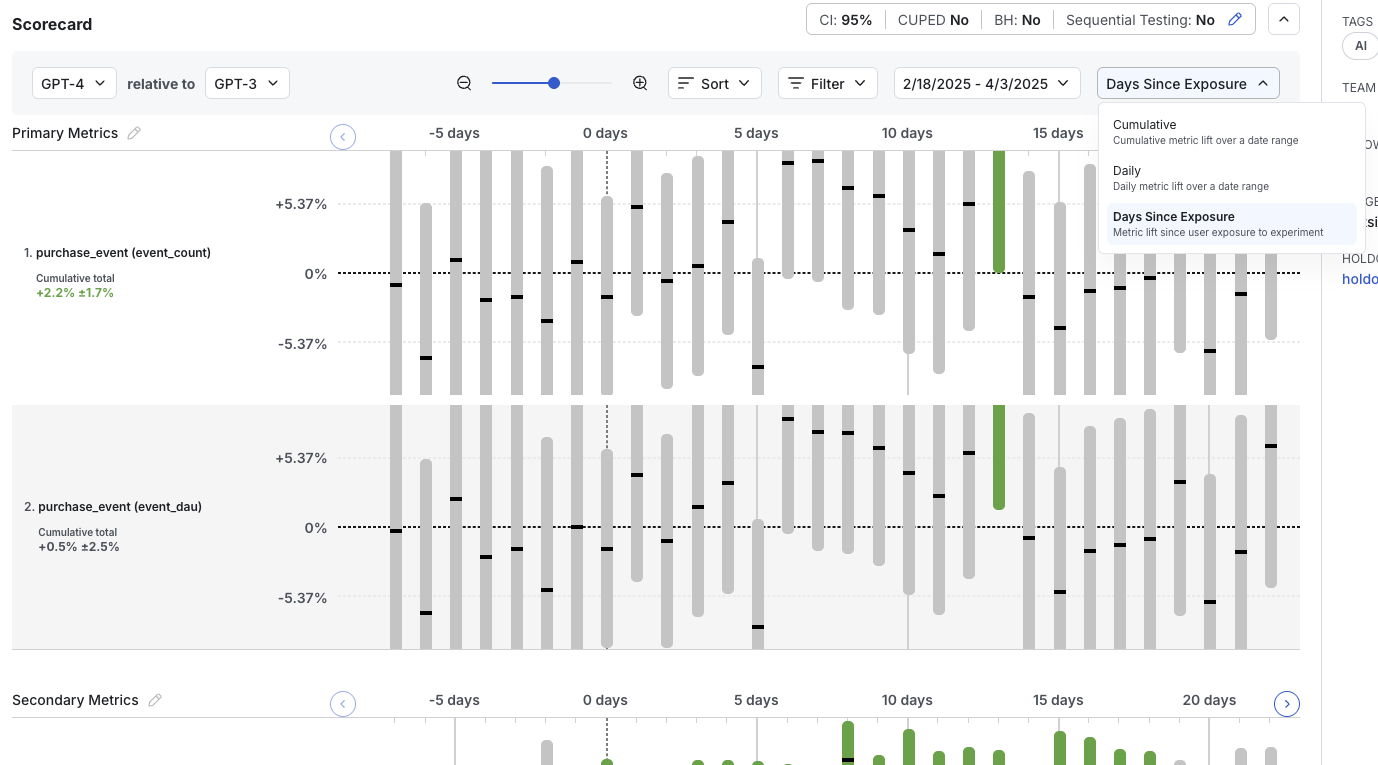
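As a rough illustration of how the Scorecard fields in this section relate (Delta %, confidence interval, significance, and color), the sketch below computes them for one metric from per-group means and standard errors. The function name `scorecard_row`, the input numbers, and the delta-method normal approximation are assumptions chosen for illustration; Statsig's actual computation may differ.

```python
from statistics import NormalDist

def scorecard_row(mean_c, se_c, mean_t, se_t, confidence=0.95):
    """Relative lift of test over control with an approximate interval.

    Hypothetical helper. Uses the delta method for the ratio of two
    independent means and a two-sided normal interval.
    """
    delta = (mean_t - mean_c) / mean_c
    # Delta-method standard error of mean_t / mean_c.
    se = ((se_t / mean_c) ** 2 + (mean_t * se_c / mean_c ** 2) ** 2) ** 0.5
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    low, high = delta - z * se, delta + z * se
    # Significant when the interval excludes zero.
    significant = low > 0 or high < 0
    if not significant:
        color = "grey"
    elif delta > 0:
        color = "green"
    else:
        color = "red"
    return {"delta": delta, "ci": (low, high),
            "significant": significant, "color": color}

# Made-up inputs: control mean 10.0, test mean 11.0, both with SE 0.1.
row = scorecard_row(10.0, 0.1, 11.0, 0.1)
print(f"{row['delta']:+.1%}", row["ci"], row["color"])
```

Lowering `confidence` (for example to 0.80) narrows the interval, so more results are flagged as significant.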

Experiment Results Views

There are a few different views to see your Scorecard metric lifts, namely:

- Cumulative results (default view): Displays the aggregate difference between experiment groups and visualizes the corresponding confidence intervals

- Table view: Displays the same data as the cumulative view but in a table format with additional fields

- Daily results: Shows the difference between experiment groups aggregated based on days since start of experiment

- Days since exposure: Shows the difference between experiment groups aggregated based on days since exposure to the experiment
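The views above differ mainly in how each data point is bucketed before groups are compared. The sketch below shows that bucketing on a few made-up rows. The row layout, the `EXPERIMENT_START` date, and the helper `bucket_totals` are hypothetical, and the sketch stops at per-group totals rather than computing lifts.

```python
from collections import defaultdict
from datetime import date

EXPERIMENT_START = date(2024, 1, 1)  # hypothetical start date

# (group, first_exposure_date, event_date, metric_value), made-up data
rows = [
    ("control", date(2024, 1, 1), date(2024, 1, 1), 1.0),
    ("control", date(2024, 1, 2), date(2024, 1, 3), 2.0),
    ("test",    date(2024, 1, 1), date(2024, 1, 2), 3.0),
    ("test",    date(2024, 1, 3), date(2024, 1, 3), 4.0),
]

def bucket_totals(rows, key):
    """Sum metric values per (group, bucket), where `key` maps
    (first_exposure_date, event_date) to a bucket label."""
    totals = defaultdict(float)
    for group, exposed, event_day, value in rows:
        totals[(group, key(exposed, event_day))] += value
    return dict(totals)

# Cumulative: everything falls in one bucket per group.
cumulative = bucket_totals(rows, lambda exposed, event: "all")
# Daily: bucket by days since the experiment started.
daily = bucket_totals(rows, lambda exposed, event: (event - EXPERIMENT_START).days)
# Days since exposure: bucket by days since the user's first exposure.
since_exposure = bucket_totals(rows, lambda exposed, event: (event - exposed).days)

print(cumulative)
print(daily)
print(since_exposure)
```

The same event can land in different buckets depending on the view: the second control row is day 2 of the experiment but day 1 since that user's exposure.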

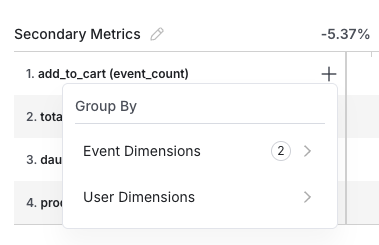

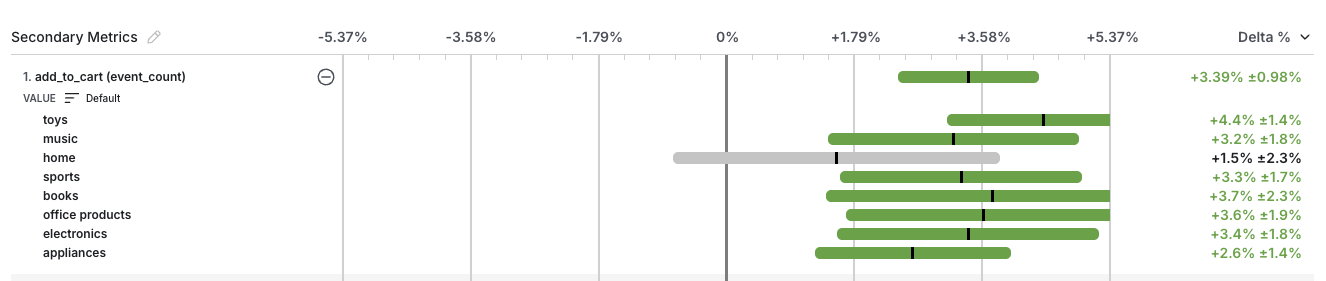

Dimensions

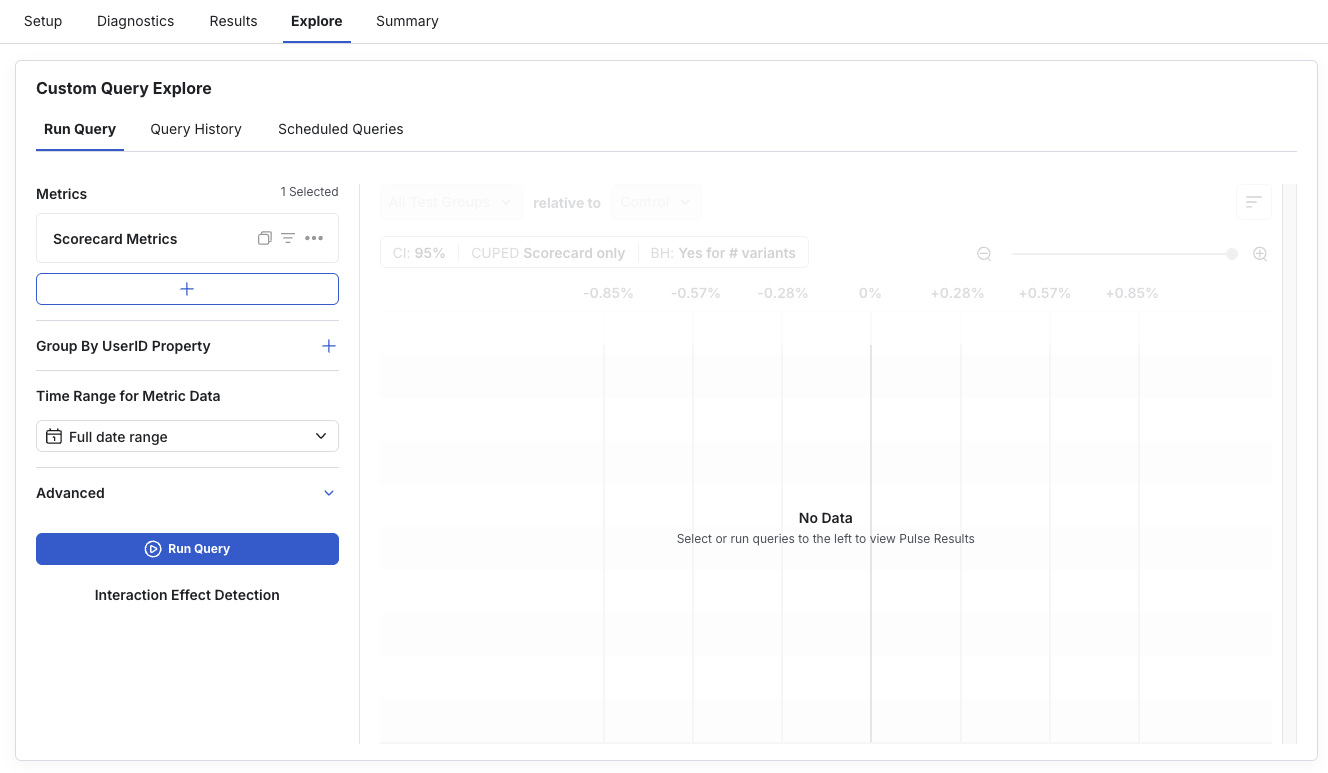

There are two ways to break down a given Scorecard metric: by a User Dimension or by an Event Dimension.

User Dimensions

User Dimensions refer to user-level attributes that are either part of the user object you log, or additional metadata that Statsig extracts. Examples of these user attributes include operating system, country, and region. You can create custom “explore” queries to filter on or group by available user dimensions. For example, you could “See results for users in the US”, or “See results for users using iOS, grouped by their country”. Go to the “explore” tab to draft a custom query.

Event Dimensions

Event Dimensions refer to the value or metadata logged as part of a custom event that is used to define the metric. If you want to see results for a metric broken down by categories that are specific to that metric, specify the dimension you want to break down by in the value or metadata attributes when you log the source event. For example, when you log a “click” event on your web or mobile application, you may also log the target category using the value attribute. Statsig will automatically generate results for each category in addition to the top-level metric. To see breakdowns for all categories within a metric, click on the (+) sign next to the metric.
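To make the “click” example concrete, the sketch below models logged events as plain dictionaries with the target category in a `value` field, then counts clicks per category alongside the top-level total. The event shape, the category names, and the helper `breakdown_by_value` are hypothetical and only mimic the idea; this is not the Statsig SDK, so check the SDK reference for the real logging call.

```python
from collections import Counter

# Made-up events: the "value" field carries the category to break down by.
events = [
    {"event_name": "click", "value": "header", "metadata": {"page": "home"}},
    {"event_name": "click", "value": "footer", "metadata": {"page": "home"}},
    {"event_name": "click", "value": "header", "metadata": {"page": "pricing"}},
    {"event_name": "purchase", "value": "pro_plan", "metadata": {}},
]

def breakdown_by_value(events, event_name):
    """Return (top-level count, per-category counts) for one event name."""
    matching = [e for e in events if e["event_name"] == event_name]
    by_category = Counter(e["value"] for e in matching)
    return len(matching), dict(by_category)

total, per_category = breakdown_by_value(events, "click")
print(total)         # top-level metric
print(per_category)  # one entry per logged category
```

A category only appears in the breakdown if it was logged with the source event, which is why the dimension has to be chosen at logging time.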

Significance Level Settings

These settings can be adjusted at any time to view Scorecard results with different significance levels.

- Apply Benjamini-Hochberg Procedure per Variant: Select this option to apply the procedure to reduce the probability of false positives by adjusting the significance level for multiple comparisons - read more here.

- Confidence Interval: Changes the confidence interval displayed with the metric deltas. Choose lower confidence intervals (e.g., 80%) when there’s higher tolerance for false positives and fast iteration with directional results is preferred over longer/larger experiments with increased certainty.

- CUPED: Toggle CUPED on/off via the inline settings above the metric lifts. Note that this setting can only be toggled for Scorecard metrics, as CUPED is not applied to non-Scorecard metrics.

- Sequential Testing: Applies a correction to the calculated p-values and confidence intervals to reduce false positive rates when evaluating results before the target completion date of the experiment. This helps mitigate the increased false positive rate associated with the “peeking problem”. Toggle Sequential Testing on/off via the inline settings above the metric lifts. Note that this setting is available only for experiments with a set target duration.
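For readers unfamiliar with the Benjamini-Hochberg option listed above, here is a minimal sketch of the textbook procedure applied to a list of p-values. The function name `benjamini_hochberg` and the example p-values are made up, and how Statsig applies the procedure per variant is not shown here.

```python
def benjamini_hochberg(p_values, alpha=0.05):
    """Textbook Benjamini-Hochberg step-up procedure.

    Returns a list of booleans, in the original order, marking which
    hypotheses are rejected (treated as significant).
    """
    m = len(p_values)
    # Indices of the p-values, sorted from smallest to largest.
    order = sorted(range(m), key=lambda i: p_values[i])
    # Find the largest rank k with p_(k) <= (k / m) * alpha.
    cutoff_rank = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * alpha:
            cutoff_rank = rank
    # Reject every hypothesis ranked at or below the cutoff.
    rejected = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= cutoff_rank:
            rejected[idx] = True
    return rejected

# Made-up p-values for four metrics.
p_values = [0.01, 0.04, 0.03, 0.20]
print([p < 0.05 for p in p_values])  # uncorrected: three look significant
print(benjamini_hochberg(p_values))  # corrected: only the smallest survives
```

In this example the correction removes two results that would pass an uncorrected 0.05 threshold, which is the intended trade-off when many metrics are compared at once.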

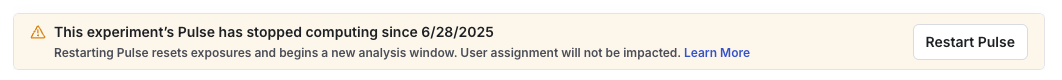

Restarting Results

- A Restart is not a Reset of your experiment. A Restart will not re-salt (i.e. re-randomize) units in your experiment, and all users will continue to receive the same group assignments.

- Statsig will begin computing experiment results anew from the restart point, so your metric results will start over. Old results may still be available in timeseries and explore query views, but they will not be carried forward or updated.

- Your Cumulative Exposures chart will update based on new exposures. The duration of the pause in computations determines whether the chart starts over from zero or the exposure count includes past exposures.